If you want to find solutions online, stop using Google.

Sometimes I post stuff to my blog about things that I could not find a satisfying solution to and where I had to figure one out myself. I post those things because I want it to be discoverable by the next person who is searching for it.

I did a quick test, and my posts don’t show up anywhere on Google. I can find them via Kagi, DuckDuckGo, and even Bing. But Google doesn’t show my stuff, even when hitting specific keywords that only my post talks about. And if my site shows up at all, it is only 6+ months after I posted.

I even tried their Search Console thing; it doesn’t report any issues with my site. So it must be the lack of ads, cookies, and AI-generated content which makes Google suspicious of it.

So, if you are an engineer looking for solutions to your problems online, just stop using Google. It’s become so utterly useless, it’s ridiculous. Of course you will miss all the cool AI features and scam ads, but there are always some drawbacks.

_Reposting my post from Mastodon yesterday, it felt relevant. https://infosec.exchange/@hertg/112989703628721677_

I gave up on Google over a decade ago - maybe two decades by now. Way back when I was using Yahoo, Ask Jeeves, Astalavista, and others. When Google came, it somehow beat them all at finding exactly what I was looking for.

Later they stopped searching for the exact words you typed, but it was okay because adding a plus in front of terms, or quotes around phrases, still let you search exact things. The combination of both systems was very powerful.

And then plus and quotes stopped working. Boolean operators stopped working. Their documentation still says they work, but they don’t.

Now, it seems like your input is used only as a general guideline to pick whatever popular search is closest to what it thinks you meant. Exact words you typed are often nowhere in the page, not even in the source.

I only search Google Maps now, and occasionally Google Translate.

> and occasionally Google translate

deepl.com is a decent alternative, if you want to replace Google Translate

Thanks for the suggestion. I’ve heard of it, but haven’t tried it yet - but I will.

I always preferred DeepL for translations. That is, until I started using ChatGPT, which usually seems to do a much better job than either Google or DeepL (for the languages I have tried).

> So it must be the lack of ads, cookies, and AI generated content which makes Google suspicious of it

If your site isn’t integrated with Google’s AdSense infrastructure, it is immediately at a disadvantage: Google won’t scan your site anywhere near as often, and even when it does, it will rank your results way below any site that is integrated. It’s not that it finds your site suspicious; it is simply incentivized to look really hard at all the other sites paying for service.

I totally came to say this. Google has become designed to tarpit you into staying on the site longer. They no longer have the goal of giving you what you want quickly, they want you to see more ads.

Google makes $307/yr. per user. They are strongly motivated to tarpit us. If we want a clean search experience we need to be open to the idea of paying.

As an embedded systems dev that searches a lot of obscure stuff, I use Kagi and love it. Go try its free searches and see for yourself.

If you value your time and mental stability, please do yourself a favor and go see what clean, high quality search results look like on Kagi.

Google used to be better than DDG like a year ago; now it’s almost completely unusable for development, and I find myself going back to DDG and actually finding what I want instead of some unrelated nonsense, ads, and LLM output crap.

It kinda depends on what you’re looking for. For technical stuff DDG is much better than Google (I don’t know about Kagi), but for local information Google still gives better results. Google seems to return what it thinks you want to search for and not what you actually tell it to, so for exact searches it’s always worse. If you’re not sure what you’re looking for, Google can be better sometimes.

Please try Kagi.

Not saying it’s you, but some people that think paying for Search is silly have forgotten how wonderful clean searches with actual answers are.

I’ve gotten pretty used to DDG’s interface. I’ll try Kagi at some point, but I don’t know if I’d use it full time.

that’s because google is geared towards selling shit, not locating articles on the internet.

DDG shits me with its “oh a - symbol means show less of something”. No, it means I want it fucking eliminated, you fl00n.

Kagi seems to surface great independent content and I’ve been loving it.

Google’s been heading that way for a while, but goddamn this last month it’s completely shat the bed.

My fave was the other night when a search for ‘vast visual auditory sensory theatre’ (literal artist and album) gave me top results of scholarly articles. Trying to google song lyrics gave me fucking screen issues. Utterly useless now.

Yandex gives some fun results, when was the last time you saw non-reddit forum posts pop up?

Switched to Perplexity a year ago, but I occasionally still go to Google just from muscle memory. Google’s aggressively unhelpful now - it’s kinda insane.

In contrast, in my experience all the other search engines stink. Google is the only good one. But I suggest using a frontend like Araa if you want privacy.

That’s in a different book.

I’m quite fond of

I wouldn’t recommend talking to your cat about Satanism. The best bet is to just hope they never find out about it.

And on shopping list

Hold up, this exists?

Adding to my library

Here’s my collection: https://imgur.com/a/kAB0p

Looks like this website has a far larger collection: https://boyter.org/2016/04/collection-orly-book-covers/

Man, the world needs that fly book.

Love them all.

I’m stealing these

As I have stolen these, now you too shall carry on the tradition.

Thank you master, I will not disappoint you.

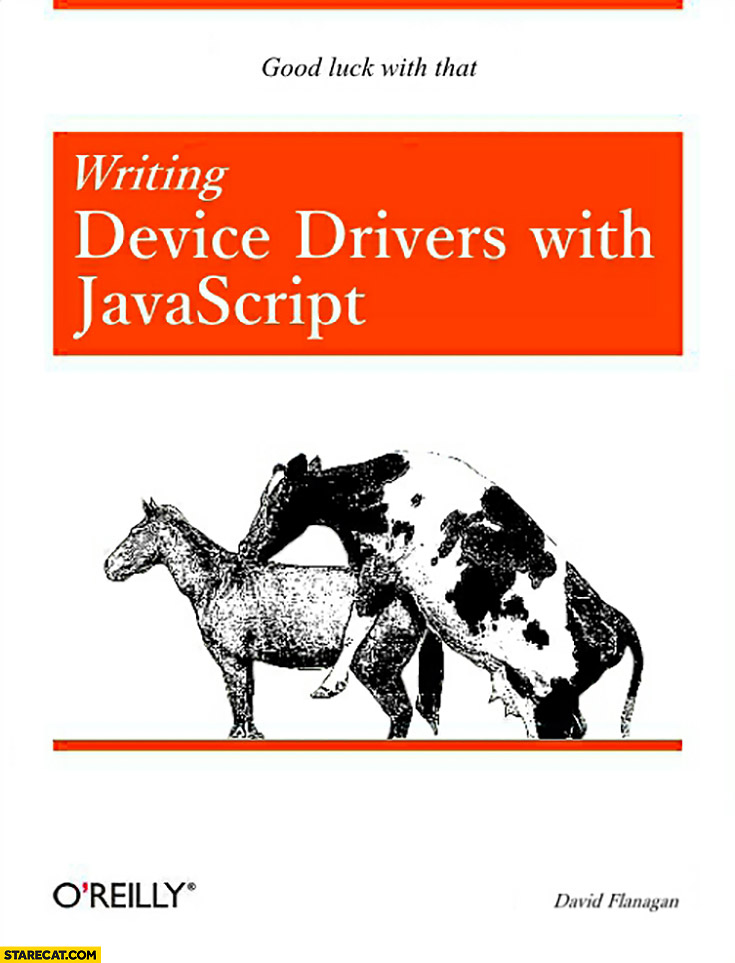

It burns

webassembly

perhaps javascript -> rust?

[off topic]

I’ve been telling people to read ‘Cryptonomicon’ for decades.

It tells two interwoven stories. Back in WW2 the grandfather is involved in proactive missions to keep the Nazis from learning the Allies had broken their codes, and in the late 1990s the grandson is trying to start an online bank.

This book also basically predicted cryptocurrency (but not the blockchain stuff)

I had a long flight so I took along Reamde.

I’d read it twice before, but damned if it didn’t hook me again. I got to the first airport around 10 am and didn’t get home until 3 am. Saved my sanity.

For the uninitiated, Reamde involves the kidnapped niece of a billionaire game maker; the game is really fun and also a money laundering operation. There are terrorists, Right Wing militias, undercover spies, demented science fiction authors, and a bear skin rug.

My library estimates I’ll be able to read it in 7 weeks, so thanks for the future surprise I’ll forget about until then.

I remember stumbling into this book at a shop a while back, reading the synopsis on the back and then the first page, thinking “This should be fun.” I was not prepared for how enthralling and deep the story went. Perfect travel book, loved reading it on flights. Would be amazing to see it adapted as an HBO show, especially the hunt in the forest towards the end. I was laughing so hard!

He just casually invented cryptocurrency as a plot device.

*(char*)0 = 0; - What Does the C++ Programmer Intend With This Code? - JF Bastien - C++ on Sea 2023

How would you use that for debugging?

(Sry I’m too cheap to go and buy the book)

My best guess is that in some configurations it raises SIGSEGV and then dumps core. Then, you use a debugger to analyse the core dump. But then again you could also set a breakpoint, or if you absolutely want a core dump, use abort() and configure SIGABRT to produce a core dump.
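A rough sketch of the "crash on purpose, then look at how the process died" idea from the comment above. It's in Python rather than C++ only to keep it short and runnable, and it assumes a POSIX system. `CHILD_CODE`, `run_marker` and `reached_marker` are made-up names for illustration. The child calls `os.abort()` (which raises SIGABRT, like `abort()` in C) only when it reaches the path of interest, and the parent checks which signal ended it.

```python
import signal
import subprocess
import sys

# Hypothetical child program: it aborts only if the interesting branch runs.
CHILD_CODE = """
import os, sys
if sys.argv[1] == "hit":
    os.abort()  # raises SIGABRT, the same signal abort() raises in C
"""


def run_marker(arg: str) -> int:
    """Run the child and return its exit status.

    On POSIX, subprocess reports death by signal N as a return code of -N.
    """
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE, arg],
        stderr=subprocess.DEVNULL,  # hide any abort message from the child
    )
    return result.returncode


def reached_marker(arg: str) -> bool:
    """True if the child died from SIGABRT, i.e. the marked path executed."""
    return run_marker(arg) == -signal.SIGABRT
```

Whether you also get a core dump to open in a debugger depends on system settings (for example the core size limit), which this sketch doesn't touch.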

To see whether your code has executed a certain path (like `printf("here")` but as a crash).

Googling doesn’t work any more, they’re incentivised to keep you searching fruitlessly for as long as possible so they can serve more ads

Book for new programmers: How to properly prompt ChatGPT to solve errors.

Or don’t read the book and paste your question into ChatGPT.

Better yet, have your LLM of choice read the book first.

They don’t really have a long term memory, so it’s probably not going to help.

If we’re speaking of transformer models like ChatGPT, BERT or whatever: They don’t have memory at all.

The closest thing that resembles memory is the accepted length of the input sequence combined with the attention mechanism. (If left unmodified though, this will lead to a quadratic increase in computation time the longer that sequence becomes.) And since the attention weights are a learned property, it is in practise probable that earlier tokens of the input sequence get basically ignored the further they lie “in the past”, as they usually do not contribute much to the current context.
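To make the quadratic part concrete, here is a minimal sketch of plain scaled dot-product attention in pure Python. It is a simplification for illustration only: one head, no learned projections, no masking, and the function names are my own. Every token scores itself against every other token, so a sequence of n tokens produces an n x n weight matrix.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, weights).

    weights[i][j] is how much token i attends to token j.
    """
    d = len(keys[0])
    weights = []
    for q in queries:
        # One score per (query, key) pair: n scores for each of n queries.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys
        ]
        weights.append(softmax(scores))
    outputs = [
        [sum(w * v[j] for w, v in zip(row, values)) for j in range(len(values[0]))]
        for row in weights
    ]
    return outputs, weights


def weight_count(tokens):
    """Number of attention weights computed for self-attention over tokens."""
    _, weights = attention(tokens, tokens, tokens)
    return sum(len(row) for row in weights)
```

With 3 toy tokens `weight_count` gives 9, and with 6 it gives 36: doubling the sequence length quadruples the work, which is the quadratic growth mentioned above.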

“In the past”: Transformers technically “see” the whole input sequence at once. But they are equipped with positional encoding which incorporates spatial and/or temporal ordering into the input sequence (e.g., position of words in a sentence). That way they can model sequential relationships as those found in natural language (sentences), videos, movement trajectories and other kinds of contextually coherent sequences.
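As an illustration of positional encoding, here is a small sketch of the sinusoidal scheme from the original Transformer paper. That is only one common choice (many models use learned or rotary encodings instead), and the comment above doesn't say which one is meant. The function names are hypothetical.

```python
import math


def positional_encoding(position, d_model):
    """Sinusoidal encoding for one position: sin on even dims, cos on odd dims."""
    vec = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share the same frequency.
        angle = position / (10000 ** ((2 * (i // 2)) / d_model))
        vec.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vec


def add_positions(embeddings):
    """Add a position-dependent vector to each token embedding."""
    d_model = len(embeddings[0])
    return [
        [e + p for e, p in zip(emb, positional_encoding(pos, d_model))]
        for pos, emb in enumerate(embeddings)
    ]
```

The point is that the same token embedding ends up as a different vector depending on where it sits in the sequence, so the model can tell positions apart even though it processes the whole input at once.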

Yep.

I’d still call that memory. It’s not the present; arguably for a (post-training) LLM the present totally consists of choosing probabilities for the next token, and there is no notion of future. That’s really just a choice of interpretation, though.

During training they definitely can learn and remember things (or at least “learn” and “remember”). Sometimes despite our best efforts, because we don’t really want them to know a real, non-celebrity person’s information. Training ends before the consumer uses the thing though, and it’s kind of like we’re running a coma patient after that.

When ya upload a file to a Claude project, it just keeps it handy, so it can reference it whenever. I like to walk through a kind of study session chat once I upload something, with Claude making note rundowns in its code window. If it’s a book or paper I’m going to need to go back to a lot, I have Claude write custom instructions for itself using those notes. That way it only has to refer to the source text for specific stuff. It works surprisingly well most of the time.

Yeah, you can add memory of a sort that way. I assume ChatGPT might do similar things under the hood. The LLM itself only sees n tokens, though.

YA RLY

Correct. 6000 hulls.

If you’re asking for real, Designing Data-Intensive Applications is probably the best I’ve ever read (if you’re into that kind of thing)

I’ve been trying for years to complete this, but it’s very weighty and I keep forgetting minor concepts every now and then. I’m still stuck at the Replication chapter.

Yes indeed, it’s pretty technical and content-heavy. It helped me that I took a class in university that covered many of the topics, though a few things still went over my head. Maybe it’s time I do a re-read of selected chapters.

I’ll keep in mind getting the PDF.

Man! I need a dozen of these in paper format to send around.

The best book is either Consider Phlebas by Iain Banks, or Fine Structure by Sam Hughes.

Oh, you meant programming books. Maybe still try Sam Hughes, it’ll probably be more blog post than book, though

Edit: You might also like Ra by Sam Hughes; it’s magic as a field of science/engineering, and spells have programming-like syntax. Spoiler: ‘magic’ is not actually magic