- cross-posted to:

- [email protected]

> After several months of reflection, I’ve come to only one conclusion: a cryptographically secure, decentralized ledger is the only solution to making AI safer.

Quelle surprise

> There also needs to be an incentive to contribute training data. People should be rewarded when they choose to contribute their data (DeSo is doing this) and even more so for labeling their data.

Get pennies for enabling the systems that will put you out of work. Sounds like a great deal!

> All of this may sound a little ridiculous but it’s not. In fact, the work has already begun by the former CTO of OpenSea.

I dunno, that does make it sound ridiculous.

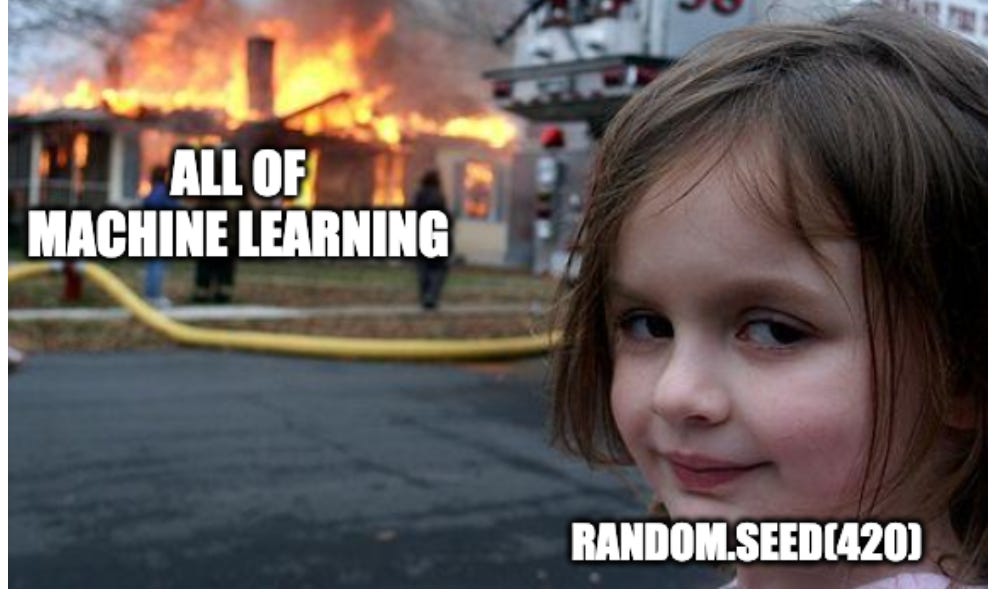

this thing was a fucking slog of bad ideas and annoying memes so I skimmed it, but other than the obvious (blockchains are way too inefficient for any of this to come even close to working), did the author even mention validation? ’cause if I’m contributing my own data and my own tags, then I’m definitely going to either insert a whole bunch of nonsense into the model to get paid, or use an adversarial model to generate malicious data and weights and use that to break the model. the way crypto shitheads fix this is with a centralized oracle, which means all of this is just a pointless exercise in combining the two most environmentally wasteful types of grift tech ever invented

Considering the mix of bad ideas, I suppose the AI model will check itself. Maybe by mining.