They are referencing this paper: LMSYS-Chat-1M: A Large-Scale Real-World LLM Conversation Dataset from September 30.

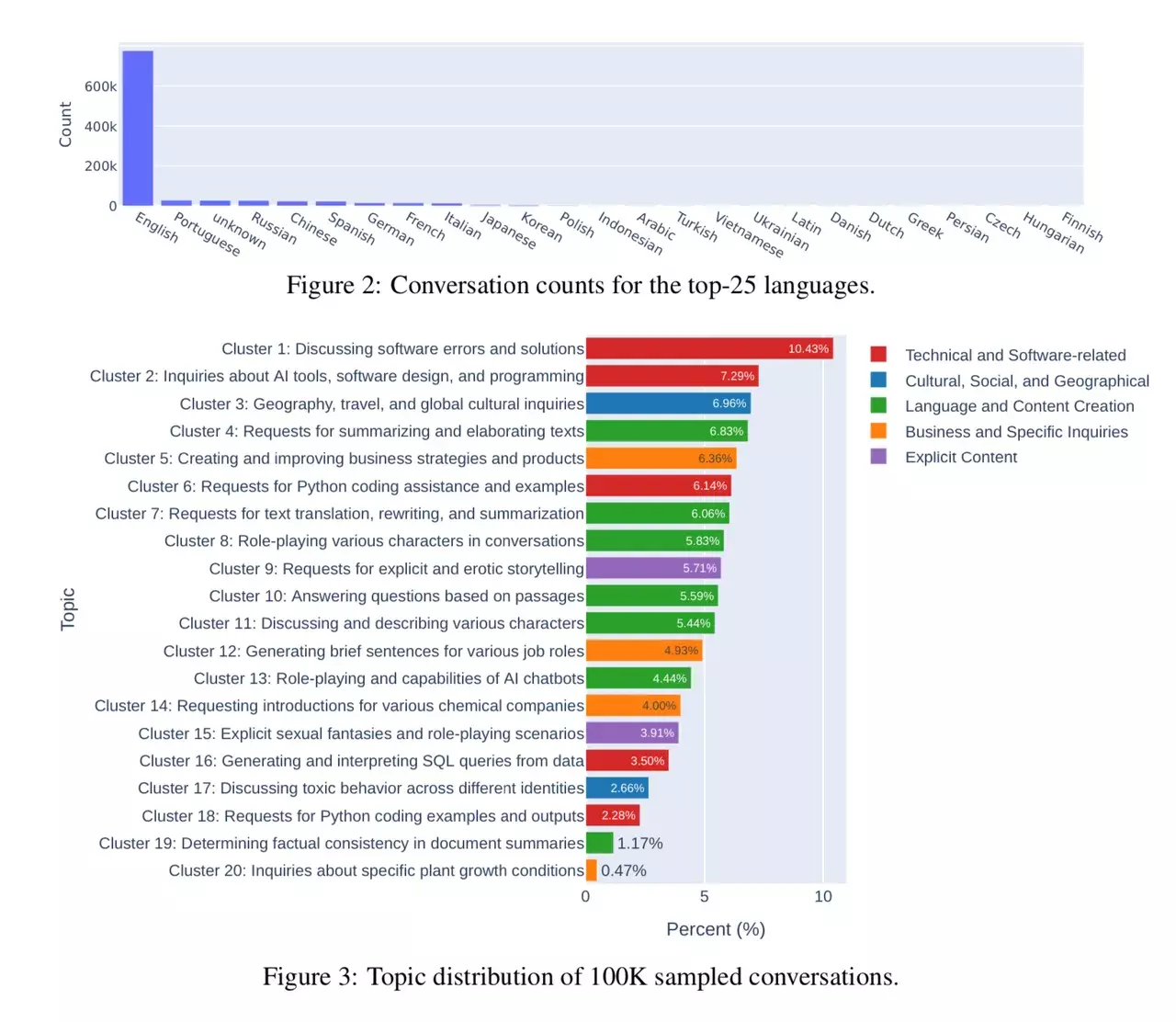

The paper itself provides some insight on how people use LLMs and the distribution of the different use-cases.

The researchers had a look at conversations with 25 LLMs. The data was collected from 210K unique IP addresses in the wild on their Vicuna demo and Chatbot Arena website.

I’m always asking myself what the community uses their local LLMs for. I believe explicit content is one of the selling points of doing inference at home, since the major LLM services like ChatGPT don’t allow it.

Tools like Replika AI, which were used for companionship and explicit content in the early days, have had trouble with that and now prevent such use. Nonetheless, for people who want to engage in that kind of activity, there are projects like SillyTavern and Oobabooga’s Chat UI, with lots of NSFW character cards available. And a week ago KoboldAI released another (good) fine-tune for this kind of activity, called LLaMA2-13B-Tiefighter.

As I use LLMs for that 10% use-case, I like them to contain knowledge about those concepts. Sexuality is part of the human world and I’m more surprised that it’s only 10%. (insert the internet is for porn reference)

But people have different opinions. I read this article a few days ago: Men Are Creating AI Girlfriends and Then Verbally Abusing Them

IMO, local LLMs lack the capabilities or depth of understanding to be useful for most practical tasks (e.g. writing code, automation, language analysis). This will heavily skew any local LLM “usage statistics” further towards RP/storytelling (a significant proportion of which will always be NSFW in nature).

That is also my observation. Even for (simple) tasks like summarization, I’ve seen LLMs insert too much inaccurate information to be useful for my own life. The tasks I see them handle are somewhat narrow and require a human in the loop, despite some people claiming we’re close to AGI.