They are referencing this paper from September 30: LMSYS-Chat-1M: A Large-Scale Real-World LLM Conversation Dataset.

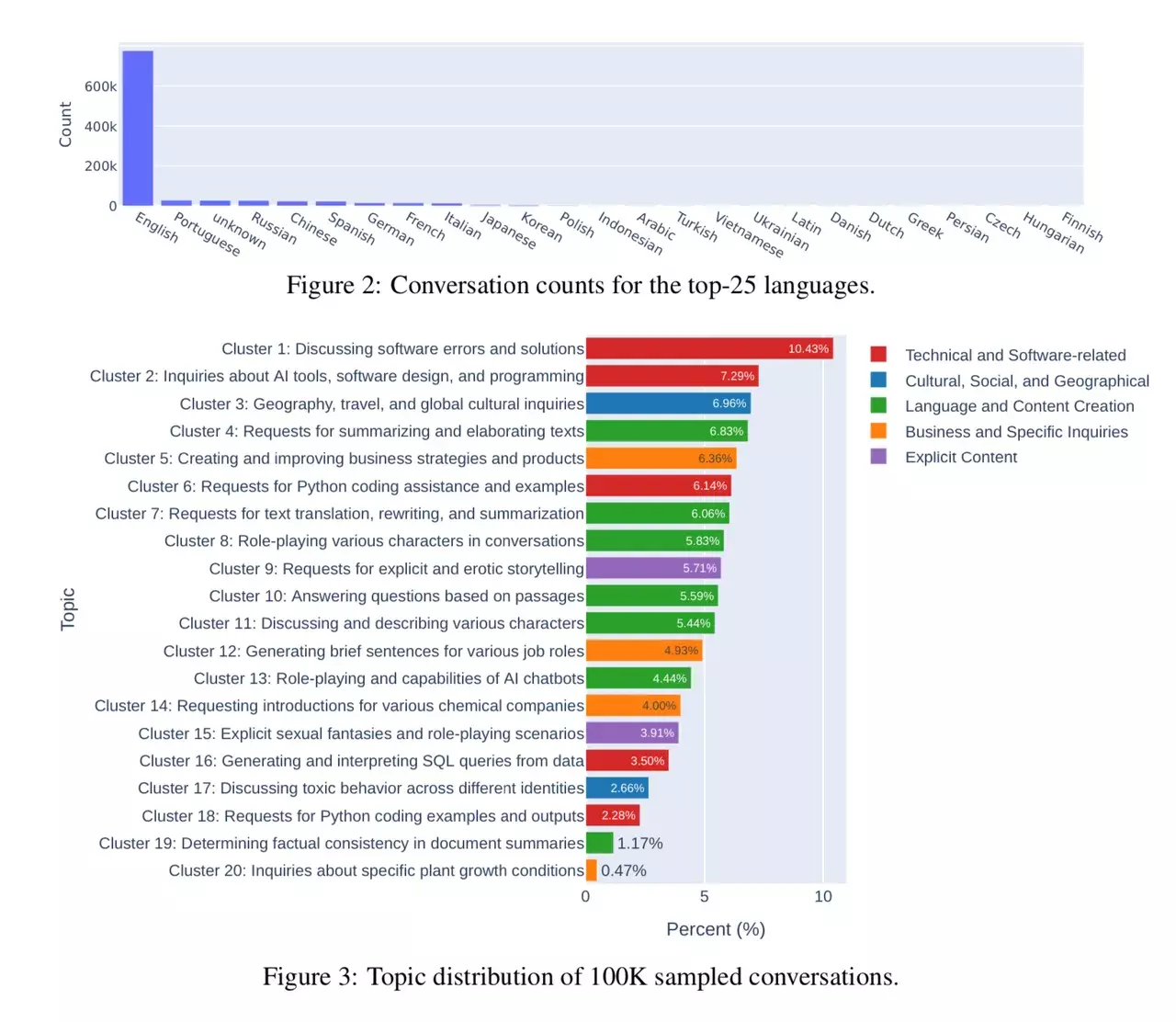

The paper itself provides some insight on how people use LLMs and the distribution of the different use-cases.

The researchers looked at conversations with 25 LLMs. The data was collected in the wild from 210K unique IP addresses on their Vicuna demo and Chatbot Arena website.

Lol @ people making AIs horny while I’m just trying to use them as free therapists for my many mental issues.

Cue “we are not the same” meme.

I’ve heard people use it for that. If you’re comfortable sharing: does it help? Do you use it for advice, or to have someone/something listen to you while you write down the stuff that’s weighing on you? Or for companionship? And is there a place for people like that? I’ve seen a few character cards for therapists, but most characters out there are either pop culture or NSFW stuff (or both) …

Hey man,

My experiences with it are kinda double-edged. On the one hand, explaining your situation and feelings to ChatGPT is kind of cathartic, since you’re sitting down, writing them out and thinking about them. GPT will usually analyze things a bit and offer some “options” ranging from totally realistic to wildly imagined. You can then pick the points you want to analyze further and go from there, sometimes with surprising results.

On the other hand, many such conversations have ended with GPT noting that it’s not a mental health specialist and can offer no further help or insight.

Yeah, I don’t really like the way ChatGPT talks to me. It a) lectures me too much and b) has a very distinct tone of voice and choice of words that always borders on sounding pretentious, which I don’t really like. But I have all the Llamas available, and I like them better.

Thx for the insight!

Have you seen ehartford’s Samantha models? Sounds like kind of what you’re looking for, except it’s a fine-tune rather than just a character card. He’s made multiple versions based on different base models, so have a look at Hugging Face to find one that fits your hardware. From the model card:

Mmh. I’d dismissed Samantha because of the “she will not engage in […]” part. Lemme give her a chance, then ;-)

I think that mostly applies when you combine it with the Samantha character description in the prompt, but if you swap that out for a different character card, the model itself doesn’t feel heavily censored or anything. Personally, I like it as an RP model because it isn’t hypersexual like many others are. And while Mistral-7b is very competent as an AI assistant, I don’t think it’s great for RP, so I tend to prefer fine-tunes of llama-2-13b for conversations.

Ah, nice. Yeah, I’ve had some success with Mistral 7B Claude and Mistral 7B OpenOrca (I believe newer and better ones have come out since). I really like the speed of the 7B. They engage in roleplay/dialogue and are, in my eyes, surprisingly smart. It understood how to roleplay somewhat more complicated characters with likes, dislikes and personality quirks (within limits). But you’re right, there is a difference compared to a Llama 2 with more parameters. Once you move away from the ‘normal’ smart-assistant chatbot usage, you’ll notice. I also compared the German-language fine-tunes of Mistral and Llama 2, and you can kinda tell Mistral hasn’t seen much non-English text in its original dataset.