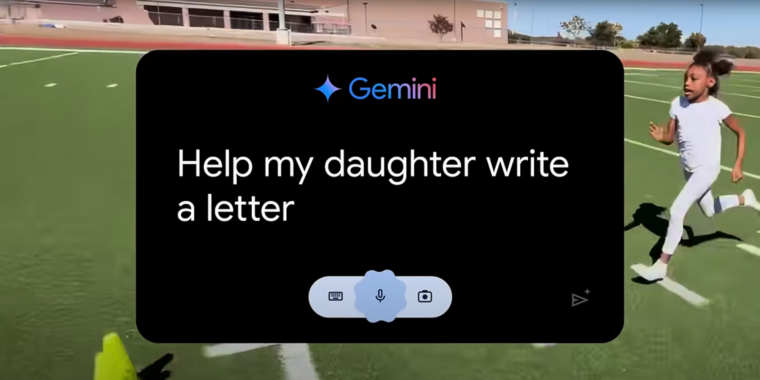

If you’ve watched any Olympics coverage this week, you’ve likely been confronted with an ad for Google’s Gemini AI called “Dear Sydney.” In it, a proud father seeks help writing a letter on behalf of his daughter, who is an aspiring runner and superfan of world-record-holding hurdler Sydney McLaughlin-Levrone.

“I’m pretty good with words, but this has to be just right,” the father intones before asking Gemini to “Help my daughter write a letter telling Sydney how inspiring she is…” Gemini dutifully responds with a draft letter in which the LLM tells the runner, on behalf of the daughter, that she wants to be “just like you.”

I think the most offensive thing about the ad is what it implies about the kinds of human tasks Google sees AI replacing. Rather than using LLMs to automate tedious busywork or difficult research questions, “Dear Sydney” presents a world where Gemini can help us offload a heartwarming shared moment of connection with our children.

Inserting Gemini into a child’s heartfelt request for parental help makes it seem like the parent in question is offloading their responsibilities to a computer in the coldest, most sterile way possible. More than that, it comes across as an attempt to avoid an opportunity to bond with a child over a shared interest in a creative way.

Talking to a rubber duck or writing to a person who isn’t there is an effective way to process your own thoughts and emotions.

Talking to a rubber duck that can rephrase your words and occasionally offer suggestions is basically what therapy is. It absolutely can help me process my emotions and put them into words, or encourage me to put myself out there.

That’s the problem with how people look at AI. It’s not a replacement for anything; it’s a tool that can do things that only a human could do before now. It doesn’t need to be right all the time, because it’s not thinking or feeling for me. It’s a tool that improves my ability to think and feel.

Well, I am pretty sure psychologists and psychiatrists out there would be too polite to laugh at this nonsense.

Precisely, you are giving it a TON more credit than it deserves.

At this point, I am kind of concerned for you. You should try real therapy and see the difference.

Psychiatrists don’t generally do therapy, and therapists don’t give diagnoses or medication.

Therapy is a bunch of techniques to get people talking, repeating their words back to them, and occasionally offering compensation methods or suggesting possible motivations of others. Telling you what to think or feel is unethical - therapy is about gently leading you to the realizations yourself. They can also provide accountability and advice, but they don’t diagnose or hand you the answer - people circle around their issues and struggle to see them, but they need to make the connections themselves.

I don’t give AI too much credit - I give myself credit. I don’t lie to myself, and I don’t have trouble talking about what’s bothering me. I use AI as a tool - these kinds of conversations are a mirror I can use to better understand myself. I’m the one in control, but through an external agent. I guide the AI to guide myself.

An AI is not a replacement for a therapist, but it can be an effective tool for self-reflection.