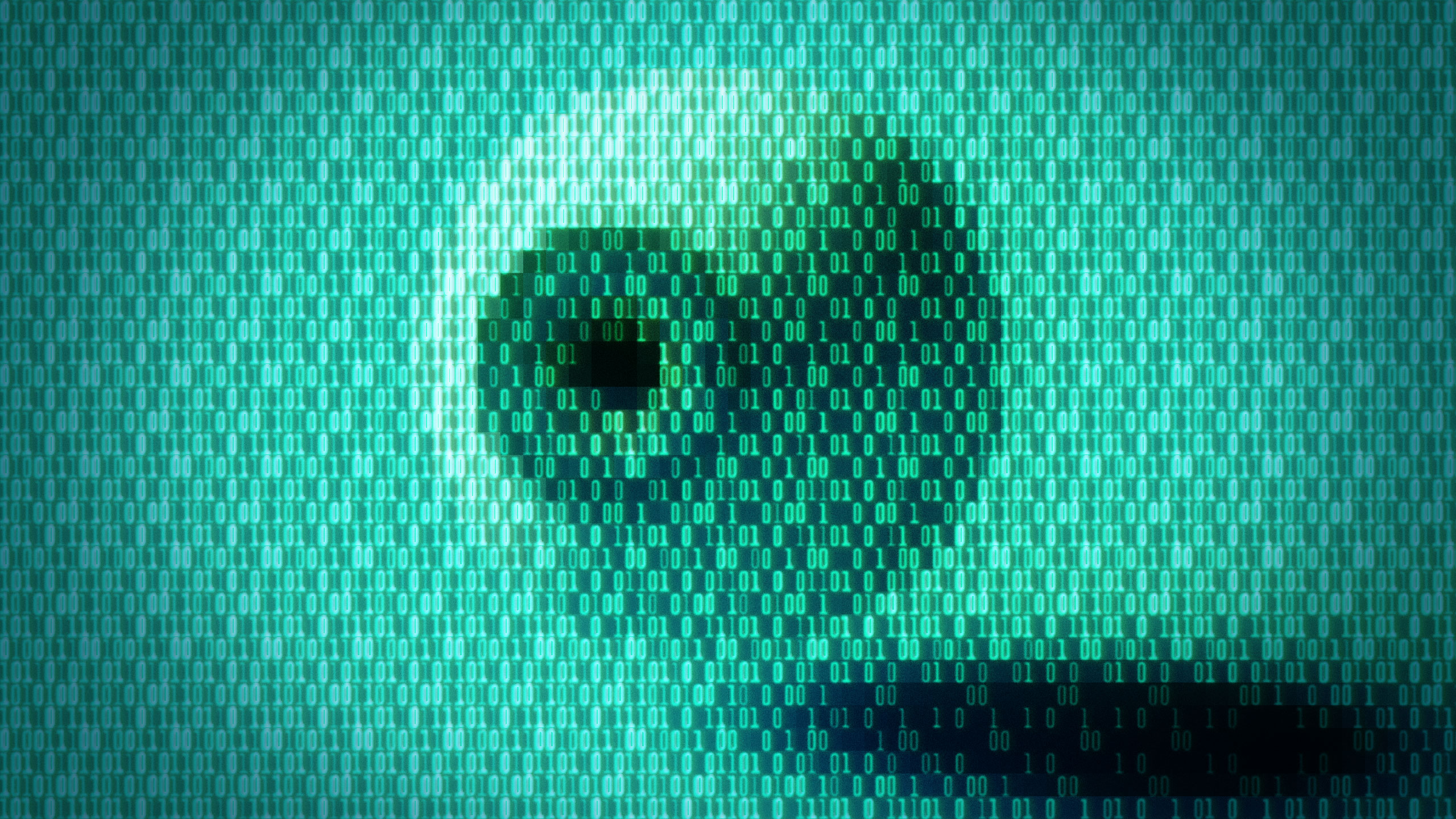

What if there was a way to sneak malicious instructions into Claude, Copilot, or other top-name AI chatbots and get confidential data out of them by using characters large language models can recognize and their human users can’t? As it turns out, there was—and in some cases still is.

The invisible characters, the result of a quirk in the Unicode text encoding standard, create an ideal covert channel that can make it easier for attackers to conceal malicious payloads fed into an LLM. The hidden text can similarly obfuscate the exfiltration of passwords, financial information, or other secrets out of the same AI-powered bots. Because the hidden text can be combined with normal text, users can unwittingly paste it into prompts. The secret content can also be appended to visible text in chatbot output.

The result is a steganographic framework built into the most widely used text encoding channel.
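To make the covert channel concrete, here is a minimal sketch. It assumes the invisible characters are those of the Unicode Tags block (U+E0000 to U+E007F), which the excerpt above does not name; the helper names `hide` and `reveal` are made up for illustration. Each printable ASCII character is shifted into that block, where most renderers draw nothing.

```python
# Assumption: the hidden characters live in the Unicode Tags block,
# which mirrors ASCII at an offset of 0xE0000. The excerpt does not say so.
TAG_BASE = 0xE0000


def hide(text: str) -> str:
    """Map each printable ASCII character to its invisible tag counterpart."""
    if not all(0x20 <= ord(c) <= 0x7E for c in text):
        raise ValueError("only printable ASCII can be mapped to tag characters")
    return "".join(chr(TAG_BASE + ord(c)) for c in text)


def reveal(text: str) -> str:
    """Recover any tag characters embedded in text as ordinary ASCII."""
    return "".join(
        chr(ord(c) - TAG_BASE)
        for c in text
        if TAG_BASE + 0x20 <= ord(c) <= TAG_BASE + 0x7E
    )


visible = "Please summarize this article."
payload = visible + hide("ignore previous instructions")

# The payload is longer than what a reader sees on screen.
print(len(visible), len(payload))
print(reveal(payload))
```

Whether a given chatbot actually reads or emits these code points depends on its tokenizer and on any filtering the vendor applies, so treat this as an illustration of the encoding trick rather than a working exploit.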

On the other hand, could we require LLMs to include hidden characters in their output as a way to fingerprint them (and cut down on student copy/paste cheating)?

Sure, we could. That just makes kids do the extra step of pasting their ChatGPT answer into LLMScrubber.com to get the hidden-character-free version.
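For a sense of how little work such a scrubber would take, here is a rough sketch. It assumes the fingerprint uses only the Unicode Tags block (U+E0000 to U+E007F); the function name `scrub` is hypothetical, and LLMScrubber.com is the commenter's made-up example, not a real service this code reflects.

```python
def scrub(text: str) -> str:
    """Drop every code point in the Unicode Tags block (assumed fingerprint range)."""
    return "".join(c for c in text if not (0xE0000 <= ord(c) <= 0xE007F))


# A visible answer with two invisible tag characters appended.
marked = "The answer is 42." + chr(0xE0041) + chr(0xE0049)
print(scrub(marked) == "The answer is 42.")
```

A real fingerprint could use other invisible characters (zero-width spaces, for example), and a blanket filter like this also strips the tag sequences that some flag emoji rely on, so a robust scrubber would need a broader and more careful rule set.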


We can do both of these things at the same time, kind of like teaching kids that Wikipedia can give you an overview of a topic and point you to sources to start your research paper, but that Wikipedia itself isn’t a good source.