https://fosstodon.org/@fedora/110821025948014034

TL;DR: Asahi Linux will be developed with the Fedora Linux distribution as the primary distribution moving forward. Fedora’s discourse forum will be the primary place to discuss Asahi Linux.

I keep seeing Docker being mentioned everywhere, but I can’t seem to figure out what it is.

Maybe I’m just dumb or my Google-fu isn’t as good as I thought, but can you offer an explanation? Is it just virtualization software?

If I understood correctly, Docker is software for managing containers. Containers are ready-to-go images that run on top of your base OS. It’s like virtualisation but more direct: for example, containers share the kernel with the host OS, which makes them lighter and far more efficient than full virtualisation.

So like an App Store for programmers…?

No, not really. Containers are sort of like tiny virtual machines that run one program. Under the hood, it’s different from that, but for simplicity’s sake, let’s just leave it at that. With this analogy, Docker would be like VMware’s software. You can use it to start and stop containers, see what containers are running, run a shell in them, etc. Docker provides the infrastructure for containers to communicate with each other, the host OS, and mounted storage volumes. The one feature it has that is sort of like an app store is that it can be used to pull container images. But you need to provide the URI for that yourself. You can’t really browse images from the CLI as far as I know (beyond a basic `docker search`), which I think would be an integral feature of an app store. Docker Hub is a website that lets you browse images, and you can copy the URI from there into your CLI to have Docker pull the image.
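To make the "start and stop containers, see what's running, run a shell" part concrete, here is a rough Python sketch that only builds the usual `docker` command lines and prints them; it doesn't run anything, so it works without Docker installed. The image `nginx:latest` and the container name `web` are just example values I picked, and the helper function is hypothetical.

```python
import shlex


def docker_commands(image: str, name: str) -> dict[str, list[str]]:
    """Return docker CLI invocations for a typical container lifecycle.

    The commands are only built as argument lists, never executed.
    """
    return {
        "pull": ["docker", "pull", image],                      # fetch the image
        "run": ["docker", "run", "-d", "--name", name, image],  # start in background
        "list": ["docker", "ps"],                               # show running containers
        "shell": ["docker", "exec", "-it", name, "sh"],         # shell inside the container
        "stop": ["docker", "stop", name],                       # stop the container
    }


# Example values (assumptions): a public image tag and an arbitrary name.
for step, argv in docker_commands("nginx:latest", "web").items():
    print(f"{step:>5}: {shlex.join(argv)}")
```

Copy any of the printed lines into a terminal on a machine with Docker to try that step for real.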

It’s lighter than a VM but a bit heavier than running an application natively (with all the dependency and configuration hell that entails).

Basically a convenient way to package and run applications with all their dependencies, without regard for what libraries & configurations exist in the host OS and other containers.

If your application only works with up to version 42 of the Whatchamacallit library, you ship it with that version of Whatchamacallit, the underlying OS doesn’t need to install it. Other containers running on the system that depend on that library don’t get broken since they’re packaged with version 69 which works fine for them.
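If it helps, the Whatchamacallit situation can be modelled with a toy Python sketch. This is not how Docker is implemented; the `Container` class and the app names are made up purely to show that each container looks up its own bundled library version and never the host's.

```python
from dataclasses import dataclass, field


@dataclass
class Container:
    """Toy stand-in for a container: an app plus its own bundled libraries."""

    app: str
    bundled: dict[str, int] = field(default_factory=dict)

    def library_version(self, library: str) -> int:
        # Only what the image shipped with is consulted, never the host.
        return self.bundled[library]


# The host has no Whatchamacallit installed at all.
host_libraries: dict[str, int] = {}

old_app = Container("old-app", {"whatchamacallit": 42})
new_app = Container("new-app", {"whatchamacallit": 69})

print(old_app.library_version("whatchamacallit"))  # 42
print(new_app.library_version("whatchamacallit"))  # 69
print("whatchamacallit" in host_libraries)         # False
```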

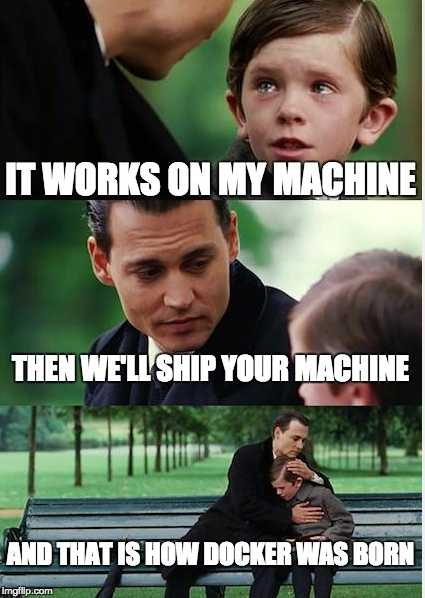

Meme answer:

Apparently not, because it’s super easy to find: searching “docker” on Google returned it as the top result for me. It’s a container platform. You have code and it needs somewhere to run. That could be directly on your computer, but that handles package conflicts poorly. So you run it in a container. This means you can install the specific versions of the dependencies the code needs, and you’re less likely to run into conflicts. You can also run multiple instances of a program, regardless of whether the program itself would allow it, because each instance runs in its own container, blissfully unaware of the others.

Docker isn’t virtualization. It’s a way of packaging applications together with their dependencies and configuration. Docker containers can be run together or segregated, depending on configuration. It’s essential in much modern software: no more “this dependency for x clashes with that dependency for y”, “works on my machine”, or “I can’t install that version”.

The containers share the host’s Linux kernel (which is virtualized on non-Linux systems). Docker runs fine on ARM, but natively only with ARM containers. It’s trickier to run x86_64 containers on an ARM host, especially with a different OS.

x86_64 containers run just fine on my M2 Mac. Since macOS doesn’t really support containers, it’s really just running a VM to run the containers in. Changing the VM allows you to run a different architecture, and Rosetta makes that fairly easy. I use Colima to run my containers, and to make them run as x86_64 it’s just a command-line flag.
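For anyone who wants to try this, here is a small hedged sketch. It does not show the Colima flag mentioned above; it uses Docker’s own `--platform` option on `docker run` to request an architecture. The helper names and the `alpine` image are my own example choices, and the script only builds and prints the command.

```python
import platform

# Common spellings of CPU architectures mapped to Docker's platform names.
ARCH_ALIASES = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "arm64": "arm64",
    "aarch64": "arm64",
}


def needs_emulation(host_machine: str, want_arch: str = "amd64") -> bool:
    """Return True when the host CPU differs from the requested architecture."""
    host = host_machine.lower()
    return ARCH_ALIASES.get(host, host) != want_arch


def run_command(image: str, want_arch: str = "amd64") -> list[str]:
    """Build a `docker run` command that requests a specific architecture."""
    return ["docker", "run", "--rm", "--platform", f"linux/{want_arch}", image]


host = platform.machine()
print("host architecture:", host)
print("emulation needed for amd64:", needs_emulation(host))
print(" ".join(run_command("alpine")))
```

On an Apple Silicon Mac the second line should report that emulation is needed, which is the case where the VM plus Rosetta (or another emulator) does the heavy lifting.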

Here’s the difference between virtualisation and containerisation:

virtualisation –> virtualise / emulate an entire machine (including hardware). Meaning you’re running a second virtual computer (called a guest) within your own computer (called the host)

containerisation –> cordon off parts of your system for a group of processes aka contain them to parts of your system.

Imagine you’re in a factory with a group of workers that handle generic tasks (CPU), another group that handles graphical tasks (GPU), a storage room (RAM), and an operator (the operating system).

Virtualisation is the equivalent of taking some generic workers, letting them build a separate factory within the existing factory, and act like another factory. They may even know how to translate instructions from the host factory to instructions understood only in the guest factory. They also occupy a part of the storage room. And to top it off they of course have their own operator that communicates with the host operator before doing virtually anything.

Containerisation is the equivalent of the operator starting processes that either do not know how large the storage room, generic worker pool, and graphical worker pool are, or only have access to a section of them. It basically contains them in their own view of the world with very little overhead. No new factory, no new operator, no generic workers that behave like graphical workers or can only understand certain instructions.
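On Linux, that "own view of the world" is built largely from kernel namespaces (plus cgroups for resource limits). As a rough illustration, this Python sketch lists the namespaces the current process belongs to by reading `/proc/self/ns`. It assumes a Linux system; anywhere that directory is missing (macOS, for example) it simply returns an empty result. The function name is my own.

```python
import os


def current_namespaces(ns_dir: str = "/proc/self/ns") -> dict[str, str]:
    """Map each namespace type (pid, net, mnt, ...) to its kernel identifier.

    Returns an empty dict when the directory does not exist.
    """
    if not os.path.isdir(ns_dir):
        return {}
    namespaces = {}
    for kind in sorted(os.listdir(ns_dir)):
        try:
            # Each entry is a symlink whose target names the namespace.
            namespaces[kind] = os.readlink(os.path.join(ns_dir, kind))
        except OSError:
            continue  # skip entries we are not allowed to read
    return namespaces


for kind, ident in current_namespaces().items():
    print(f"{kind:>20}  {ident}")
```

Run it once on the host and once inside a container: processes in a container typically show different identifiers for things like `pid`, `net`, and `mnt`, which is the cordoning-off described above.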

Distribution

In terms of distribution, virtualisation is like passing around mini-factories to other factories (or, optimally, descriptions of the factories needed to execute the instructions within the file). Containers are really a bunch of compressed directories with some meta information about which process should be started first (amongst other things), and with that process and its subprocesses having a limited view of their world.
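The "compressed directories plus meta information" idea can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not the real image format (actual images follow a more detailed specification); the file path, the `entrypoint` key, and the helper names are all made up for illustration.

```python
import io
import json
import tarfile


def make_layer(files: dict[str, bytes]) -> bytes:
    """Pack a directory tree (path -> contents) into a gzip-compressed tarball."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for path, data in files.items():
            info = tarfile.TarInfo(name=path)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


def list_layer(layer: bytes) -> list[str]:
    """Return the file paths stored in a layer tarball."""
    with tarfile.open(fileobj=io.BytesIO(layer), mode="r:gz") as archive:
        return archive.getnames()


# One "layer" holding a single script, plus metadata naming the first process.
layer = make_layer({"app/hello.sh": b"#!/bin/sh\necho hello\n"})
metadata = json.dumps({"entrypoint": ["/app/hello.sh"], "layers": 1})

print(list_layer(layer))
print(metadata)
```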

On Mac

Containerisation was popularised on Linux (even though BSD had it first, IIRC), which is where the operating system primitives and concepts that enable what we now know as Docker were developed. Since virtually all containers in existence these days require Linux due to how they are created and the binaries they contain, running Docker (or anything that supports containers) on a Mac requires virtualising a Linux machine within which the containers run.

This comes with its own hurdles and of course is slower than on Linux.