I don’t unless a website requires that I talk to one as a poor excuse for customer service.

So, less than once a year.

I just type “Speak to a human” until it relents. It usually takes 3-4 tries. Kind of the chatbot equivalent of mashing 0 on telephone IVRs. After it agrees to get a human, the only one of its questions I answer is what I need support with, since that gets forwarded to the tech.

About as often as I have a conversation with my dishwasher: never.

Jeez…how do you think your dishwasher feels about that? Monster!!

Never

If by conversation you mean asking for a word by describing it conceptually because I can’t remember, every day. If you mean telling it about my day and hobbies, never.

That is basically the best use of LLMs.

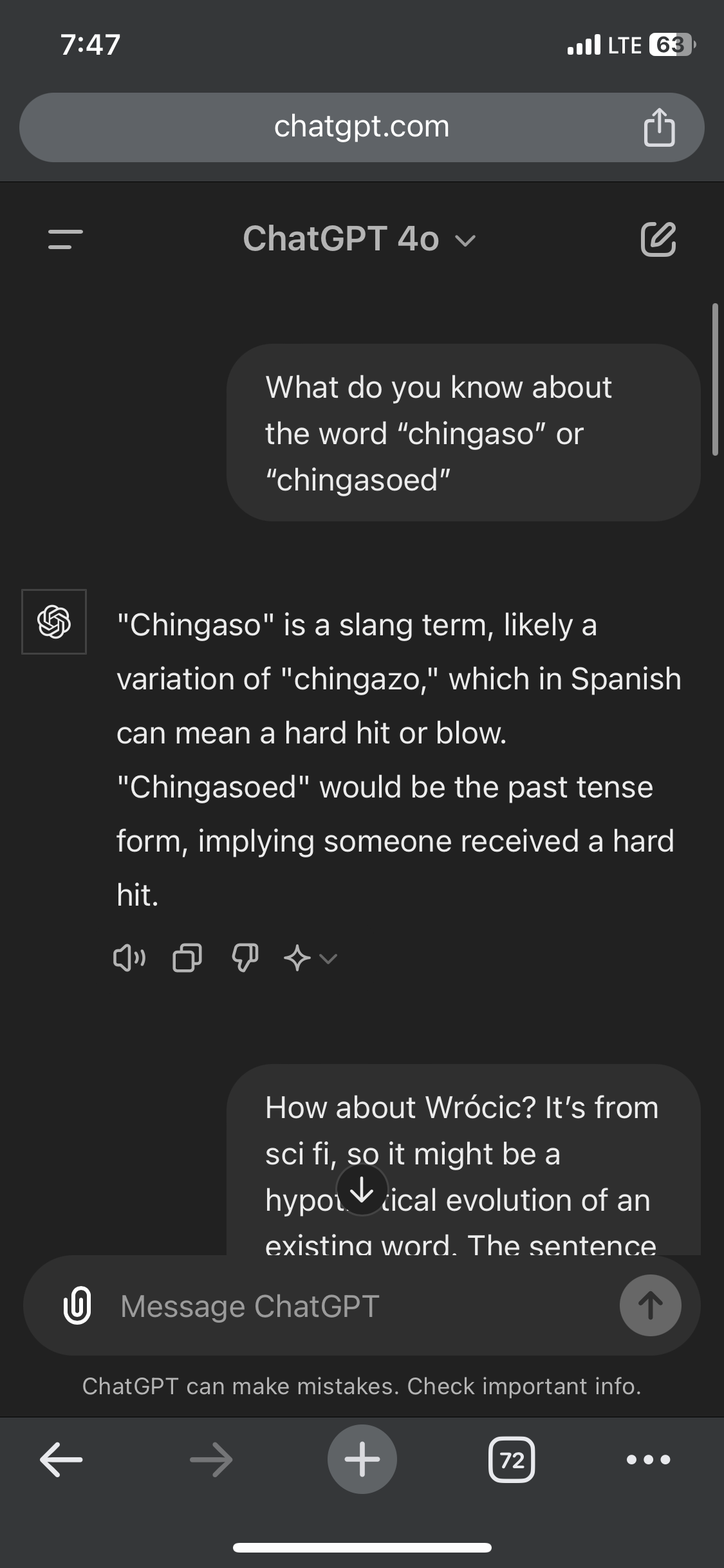

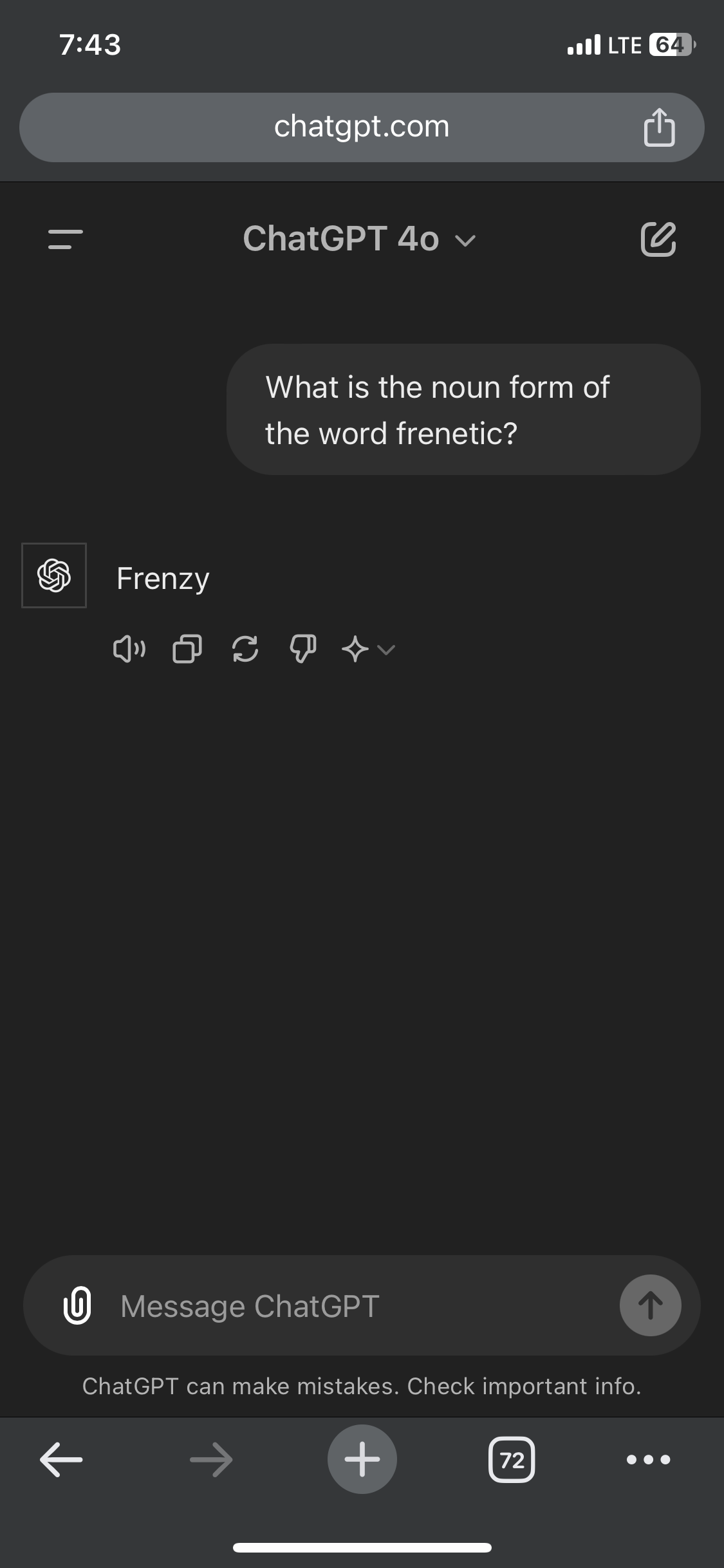

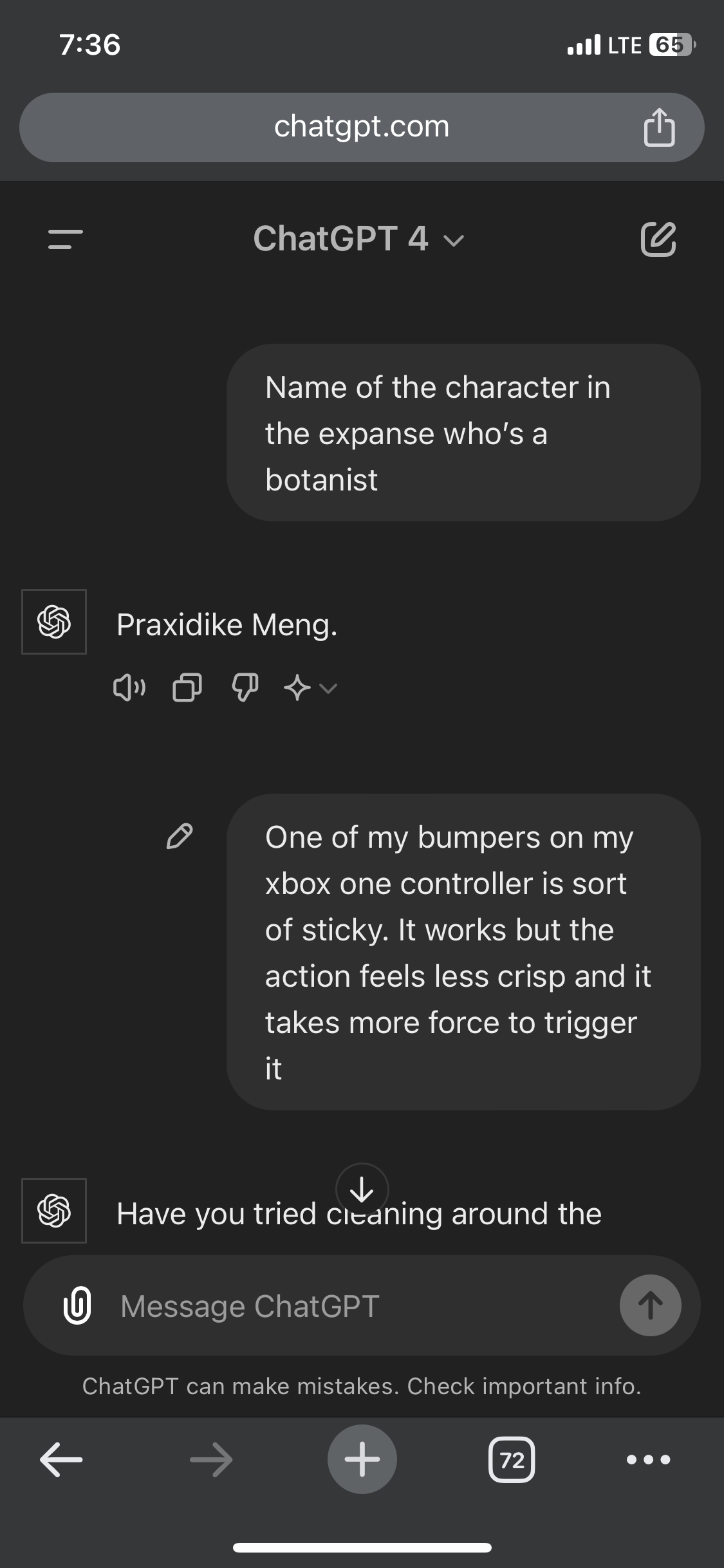

A few of the most useful conversations I’ve had with ChatGPT:

I had fun with it a dozen times or so when it was new, but I’m not amused anymore. The last time was about a month ago: someone told me they had used ChatGPT to look up an answer, so I deliberately found a few prompts that made it spit out obvious bullshit and sent them the screenshots to make the point that LLMs aren’t reliable and that asking them factual questions is a bad idea.

> asking them factual questions is a bad idea

This is a crucial point that everybody should make sure their non-techie friends understand. AI is not good at facts. AI is primarily a bullshitter. They are really only useful where facts don’t matter, like planning events, finding ways to spend time, creating art, etc…

If you’re prepared to fact check what it gives you, it can still be a pretty useful tool for breaking down unfamiliar things or for brainstorming. And I’m saying that as someone with a very realistic/concerned view about its limitations.

Used it earlier this week as a jumping off point for troubleshooting a problem I was having with the USMT in Windows 11.

Absolutely. With code (and I suppose other logical truths) it’s easier because you can ask it to write a test for the code, though the test may be invalid, so you have to check that too. With arbitrary facts, I usually ask “is that true?” to have it check itself. Sometimes it just gets into a loop of lies, but other times it actually does tell the truth.

Never

I’ve done it once or twice in the early days to see what was up, never since then.


Your lack of faith is disturbing.

Don’t be too proud of this technological terror you’ve created. The ability to compose haiku is insignificant next to the power of a nice hug.

Lol, somebody downvoted you. I love a good hug.

All too easy

It’s simple: I don’t.

Maybe 1-3 times a day. I find that the newest version of ChatGPT (GPT-4o) typically returns answers that are faster and better quality than a search engine query, especially for queries that require a bit more conceptualization or are more bespoke (e.g., give me recipes to use up these 3 ingredients), so it has replaced search engines for me in those cases.

I forget how the conversation went, but one day a conversation I had with someone about comprehensibility (which was often an issue) compelled me to talk to an AI. I remember that talk because the AI did not have the comprehension issues the complaining humans had.

Yeah I’ve run into this a bit. People say it “doesn’t understand” things, but when I ask for a definition of “understand” I usually just get downvotes.

The closest I come to chatting is asking GitHub Copilot to explain syntax when I’m learning a new language. I just needed to contribute a class library to an existing C# API, hadn’t done OOP in 15 years, and had never touched .NET.

Not as much as I did at the beginning, but I mainly chalk that up to learning more about its limitations and getting better at detecting its bullshit. I no longer go to it for design because it doesn’t do that well at the scale I need. Now I mainly use it to refactor already-working code, to remember what a kind of feature is called, and to catch random bugs that usually turn out to be typos that are hard to spot visually. Beyond that, I only use it for code generation a line at a time with Copilot, or sometimes a function at a time if the function is super simple but tedious to type, and even then I only accept a suggestion if it’s what I was already thinking of typing.

Basically it’s become fancy autocomplete, but that’s still saved me a tremendous amount of time.

Once or twice a week

Maybe 3-4 times a year. Can’t see using it more than that at this point.