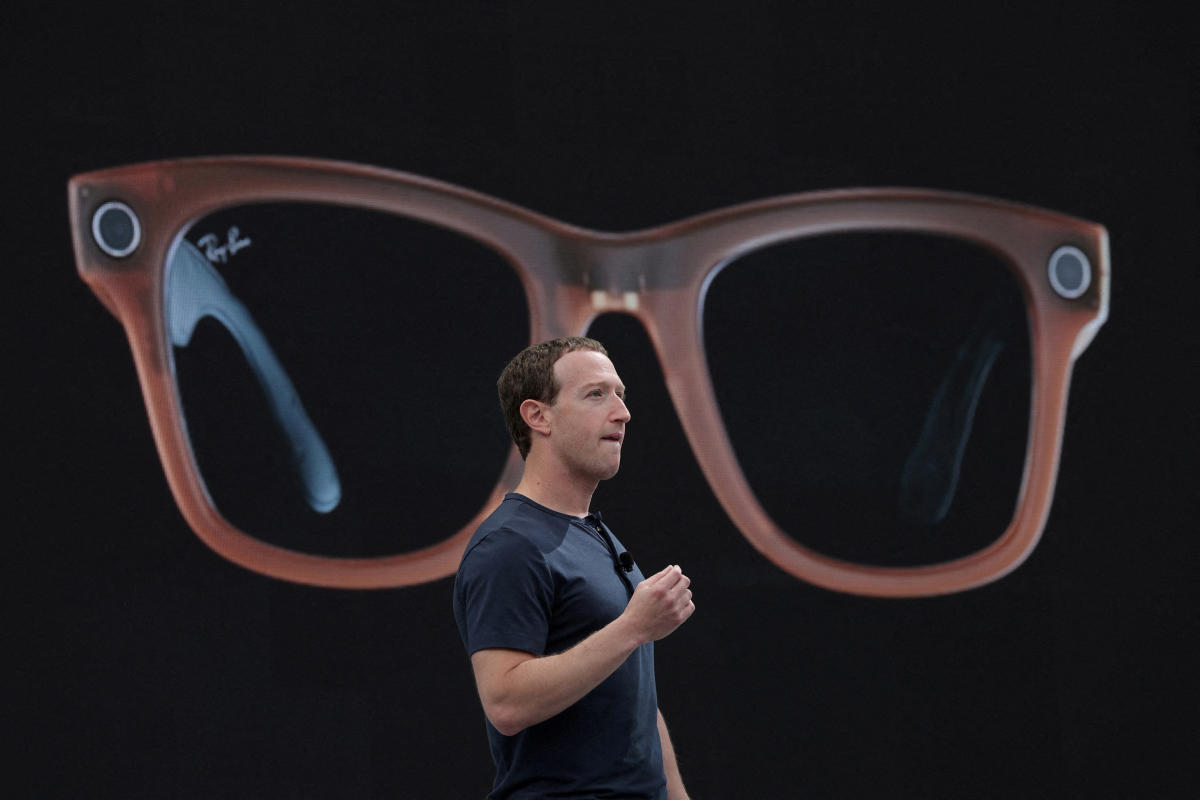

Meta on Tuesday announced the release of Llama 3.1, the latest version of its large language model that the company claims now rivals competitors from OpenAI and Anthropic. The new model comes just three months after Meta launched Llama 3 by integrating it into Meta AI, a chatbot that now lives in Facebook, Messenger, Instagram and WhatsApp and also powers the company’s smart glasses. In the interim, OpenAI and Anthropic already released new versions of their own AI models, a sign that Silicon Valley’s AI arms race isn’t slowing down any time soon.

Meta said that the new model, called Llama 3.1 405B, is the first openly available model that can compete against rivals in general knowledge, math skills and translating across multiple languages. The model was trained on more than 16,000 NVIDIA H100 GPUs, currently the fastest available chips that cost roughly $25,000 each, and can beat rivals on over 150 benchmarks, Meta claimed.

IMO the more interesting models are 70B and 8B, aka the ones you can actually host yourself and (for basically the first time) open models distilled from such a large “parent” model.

But the release is a total dud among testers because they’re bugged with llama.cpp, lol.

I’ve got llama 3.1 8b running locally in open webui. What do you mean it’s bugged with llama.cpp?

llama.cpp, the underlying engine, doesn’t support extended RoPE yet. Basically this means long context doesn’t work, and short context could be messed up too.
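For anyone wondering what “extended RoPE” changes, here is a minimal sketch of the frequency rescaling, modeled on the scheme in Meta's reference code. The constants (`ROPE_THETA`, `SCALE_FACTOR`, the two frequency factors, the 8192 original context) are recalled from the published Llama 3.1 config and should be treated as assumptions to check against the model's own config file. The function names are made up for illustration.

```python
import math

# Assumed values, recalled from the published Llama 3.1 config; verify before relying on them.
ROPE_THETA = 500_000.0
HEAD_DIM = 128
SCALE_FACTOR = 8.0
LOW_FREQ_FACTOR = 1.0
HIGH_FREQ_FACTOR = 4.0
ORIGINAL_CONTEXT = 8192


def base_frequencies(head_dim=HEAD_DIM, theta=ROPE_THETA):
    """Standard RoPE inverse frequencies, one per pair of head dimensions."""
    return [1.0 / theta ** (i / head_dim) for i in range(0, head_dim, 2)]


def scale_frequencies(freqs):
    """Rescale RoPE frequencies for long context.

    Short wavelengths are left alone, long wavelengths are divided by
    SCALE_FACTOR, and the band in between is interpolated smoothly.
    """
    low_freq_wavelen = ORIGINAL_CONTEXT / LOW_FREQ_FACTOR
    high_freq_wavelen = ORIGINAL_CONTEXT / HIGH_FREQ_FACTOR
    scaled = []
    for freq in freqs:
        wavelen = 2 * math.pi / freq
        if wavelen < high_freq_wavelen:
            scaled.append(freq)  # high frequency: unchanged
        elif wavelen > low_freq_wavelen:
            scaled.append(freq / SCALE_FACTOR)  # low frequency: fully scaled
        else:
            smooth = (ORIGINAL_CONTEXT / wavelen - LOW_FREQ_FACTOR) / (
                HIGH_FREQ_FACTOR - LOW_FREQ_FACTOR
            )
            scaled.append((1 - smooth) * freq / SCALE_FACTOR + smooth * freq)
    return scaled


freqs = base_frequencies()
scaled = scale_frequencies(freqs)
changed = sum(1 for f, s in zip(freqs, scaled) if f != s)
print(f"{changed} of {len(freqs)} frequencies are rescaled")
```

An engine that skips this step still loads the model and generates text, which would explain output that runs without errors but degrades, especially at long context.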

I am also hearing rumblings of a messed up chat template?

Basically with any LLM in any UI that uses a GGUF, you have to be very careful of bugs you wouldn’t get in the huggingface-based backends. A lot of models run without errors, but not quite right.
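One way to check for a chat template problem is to compare what the frontend actually sends against the expected prompt layout. Below is a rough sketch of the Llama 3 instruct format as recalled from Meta's published model card. The helper name `format_llama3_prompt` is hypothetical, and the tool-calling tokens added in 3.1 are left out, so treat it as an approximation rather than the official template.

```python
def format_llama3_prompt(messages):
    """Build a Llama 3 style instruct prompt from a list of role/content dicts.

    Assumed layout: each turn is a role header, a blank line, the content,
    then an end-of-turn token. The prompt ends with an open assistant header
    so the model continues from there.
    """
    prompt = "<|begin_of_text|>"
    for message in messages:
        prompt += (
            f"<|start_header_id|>{message['role']}<|end_header_id|>\n\n"
            f"{message['content'].strip()}<|eot_id|>"
        )
    prompt += "<|start_header_id|>assistant<|end_header_id|>\n\n"
    return prompt


example = format_llama3_prompt(
    [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What is RoPE?"},
    ]
)
print(example)
```

If the prompt a frontend sends differs from this in its special tokens or newlines, the model will often still answer, just noticeably worse.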

I wouldn’t call it a “dud” on that basis. Lots of models come out with lagging support on the various inference engines, it’s a fast-moving field.

Yeah, but it leaves a bad initial impression when all the frontends ship it and the users aren’t aware it’s bugged.

Does anyone know what it takes to run 70b?

Seems like min 32gb RAM and 4070?

I mean I have a 24GB GPU, and it’s almost too slow for me. If someone makes an AQLM I may run it some.

You were able to load 70b just into GPU?

Yeah, an AQLM 70B will fit in 24GB with very short context, but decent quality.
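As a back-of-the-envelope check on the numbers above, here is a sketch that estimates memory for the weights alone at different precisions. It assumes exactly 70e9 parameters and roughly 2 bits per weight for an aggressive quantization like AQLM, and it ignores the KV cache and runtime overhead, which is why context has to stay short when the weights nearly fill the card.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_70B = 70e9  # assumed round figure for a "70B" model
GPU_MEMORY_GB = 24

for label, bits in [("fp16", 16), ("8-bit", 8), ("4-bit", 4), ("2-bit", 2)]:
    gb = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"{label:>6}: {gb:6.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions only the roughly 2-bit case lands under 24 GB, with a few GB left for context.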

You never hear about it, mostly because it’s so hard to quantize in the first place, but also because it’s not a GGUF so most people ignore the format, lol.

Willing to bet Zuck enjoys Jarvis. Bet there is also a ridiculous back and forth over this with Musk and Altman.

Any of the 3 could solve American homelessness overnight, but build Hawaiian prepper bunker islands instead.

At least Zuck is only trying to lead and not monopolize.

I do not understand the goal of Llama. Is facebook trying to make their model so small it will run on a phone?

There are many, but one strategic goal is to “poison the well” for OpenAI.

OpenAI is trying to lobby for regulations that let them monopolize AI, so they are essentially the only ones that can sell it, and instead of playing this game Facebook is seeding public research so they can keep up with closed LLMs and make better cases for themselves. Which benefits them, as then they aren’t a customer of OpenAI or Google.

Another is to attract talent themselves. AI researchers love all this.

Yet another is to set the standard. The llama architecture is THE open LLM architecture because of facebook, and you run into major problems trying to run anything else, fast. It’s also fostered a lot of innovations they wouldn’t have come up with themselves, which they can turn around and deploy for free.

And they have a bit of a moat because hosting llama 400B is freaking expensive, and they have a ton of GPUs to do it with.

I duckin love when the capitalist incentives work out for benefiting everyone

I’m not sure what entities, motivations, qualifications, or connections underpin Lex Fridman and his podcasts/YT channel, but he has interviewed many people in AI including Zuckerberg, Altman, and Musk. His interviews with Yann LeCun are quite interesting. Professor LeCun is Meta’s chief AI scientist. His longer interviews are much better overall for getting a lay-of-the-land perspective. Some little clip does not do justice to the overall points taken in context, but telling you to go watch an hour-long interview to get the answer directly does not work either.

https://www.youtube.com/watch?v=fshIOoTo40E

This is a 4min clip of LeCun saying, basically, it doesn’t hurt anyone. He’s essentially implying it will hurt OpenAI or any proprietary vendor.

I was trying to find the interview where Lex and Yann talk about the leaked Google memo last year, because that one is really good, but YT seems to be obfuscating that one intentionally in search results.

IIRC, in that one, LeCun was saying something to the effect that the only way people can really trust AI is with transparency, and that requires open source as a foundation. Using something like OpenAI in business is insane. You’re basically selling every aspect of your company to Altman for peanuts. Likewise with personal use, this is like your lifelong psychiatrist opening a few side businesses as a political analyst, insurance broker, banker, and healthcare insurance provider, while working nights as a judge, all while you’re asked to sign away any privacy or confidentiality. Models turn human language and culture into a statistical math problem that has far better than 50% probabilities in nearly any aspect of human existence. If you ask a model to give a profile for Name-1, it will tell you all kinds of seemingly unrelated things about the person. The more you interact, the more accurate this profile becomes, even in areas that make no sense, have no logical association, and were never a part of the conversation. It is the key to manipulating people unlike any other tool in history. That is why open source offline AI is the only sensible way to use AI.

You’re basically selling every aspect of your company to Altman for peanuts.

Technically you are giving that clown your money and data lol

At least Zuck is only trying to lead and not monopolize.

I think he would, if he thought he could

If you can’t monopolize, the next best thing is to make sure nobody else can.

Which is actually a pretty good thing.

That’s what back in my day was called Competition!!!

Our oligarchs figured that collusion and cartels work better for them tho.

Ain’t it grand how they can unionize while the avg American worker hates his labour?

I’m glad they named it after an animal known for its spitting. That’s what so-called AI does.

What about the battle for enormous-mound-of-horseshit dominance?

They haven’t announced any debate schedule yet.

they’re all winners on that

Llama3.1 33b would be so cool. It would be a nice middle ground for my machine.

What a waste of energy.

Innovation has stopped, not improved. BIIIIIIGA DATA is not innovation.

All for word predicting chat bots. What a waste.

At least you can (theoretically, if you have your own datacentre or botnet) run, finetune and play with this yourself, so at least it’s somewhat useful, especially if you finetune it for applications where word predicting is actually exactly what you want