- cross-posted to:

- [email protected]

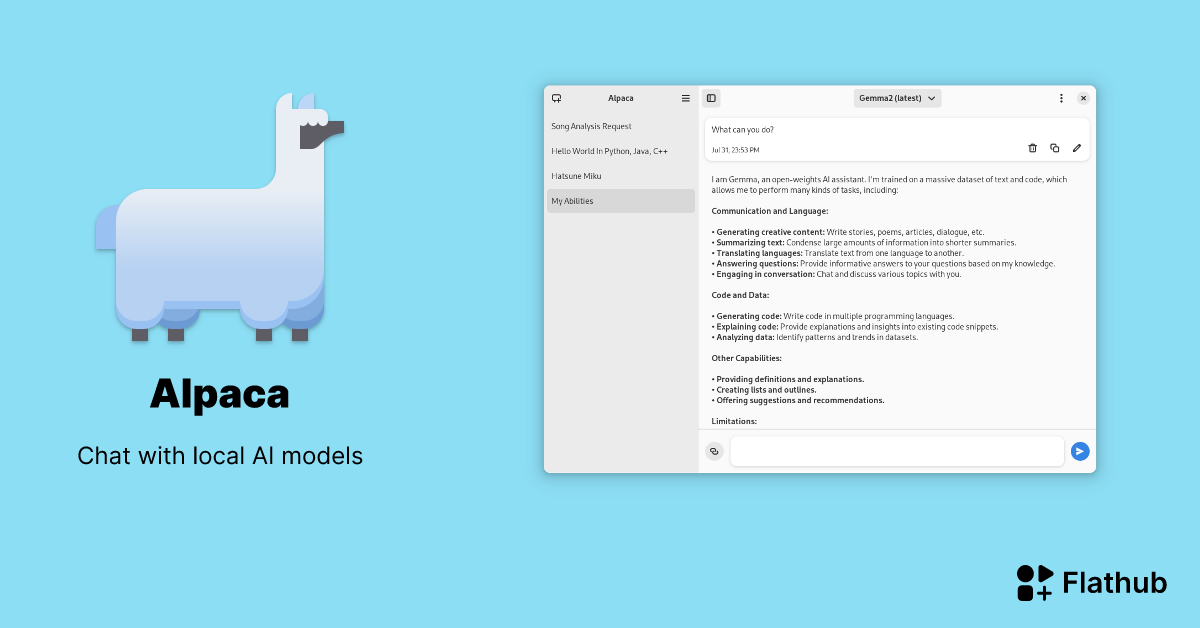

I’m not a huge fan of AI, but I do use it occasionally. I found a tool on Flathub that I’ve been trying out, so I thought I would share it in case any of you need something like it. The interface is also pretty nice if you use GNOME (it doesn’t look as nice on KDE). It’s unstable on my Arch install.

I have used Alpaca in the past, but personally I prefer GPT4ALL as it seems to be more complete.

Any particular reason you prefer GPT4ALL?

Way more models available, faster in my experience, more reliable, a local ChatGPT-compatible API, and advanced fine-tuning features. There have been some additions to Alpaca since I last used it, so maybe I will try it again soon, but since I don’t use it regularly, I use GPT4ALL because it just works, and when I tried Alpaca, it didn’t.
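For anyone wondering what a "ChatGPT-compatible API" buys you: any client that speaks the OpenAI chat-completions format can be pointed at the local server. A minimal sketch, assuming the local API server is enabled in the app's settings and listening on `http://localhost:4891/v1` (I believe that is the GPT4All default, but check your own settings); the model name is a hypothetical placeholder.

```python
import json
import urllib.request

# Assumption: local OpenAI-compatible server address; adjust to your setup.
BASE_URL = "http://localhost:4891/v1"


def build_chat_request(prompt, model, base_url=BASE_URL):
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body):
    """Pull the assistant text out of a chat-completions response dict."""
    return response_body["choices"][0]["message"]["content"]


def chat(prompt, model, base_url=BASE_URL, timeout=120.0):
    """Send the prompt to the local server and return the reply text."""
    request = build_chat_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_reply(json.loads(response.read()))


# Usage (needs the local server running; "my-local-model" is a placeholder):
#   print(chat("Say hello in five words.", model="my-local-model"))
```

Nothing here is specific to one app; the same request should work against any server that implements that endpoint.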

I’d say Alpaca is pretty much identical these days; the only major difference is the interface. If you need more power, running something like Open WebUI with Ollama makes more sense.

There’s also https://lmstudio.ai/

But LM Studio isn’t FOSS, no?

I recently did a very simple PR for this, adding keywords so I don’t keep forgetting the name of the app and not being able to find it 😅

Nice! It can also connect to a remote instance of Ollama 👍
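If you want to check that a remote Ollama box is reachable before pointing the app at it, here is a rough sketch using Ollama's `/api/generate` endpoint. The host address and model name in the usage comment are made up for illustration; 11434 is Ollama's default port.

```python
import json
import urllib.request


def build_generate_request(base_url, model, prompt):
    """Build a non-streaming POST request for Ollama's /api/generate."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(base_url, model, prompt, timeout=120.0):
    """Send the prompt to an Ollama server and return the generated text."""
    request = build_generate_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]


# Usage (hypothetical address; the model must already be pulled on that host):
#   print(generate("http://192.168.1.50:11434", "llama3", "Why is the sky blue?"))
```

The remote machine has to be set up to accept connections from your network for this to work.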

Something tells me it’s not local.

I think something is lying to you.

Local AI models are a thing, you know. Just run this on a computer with no internet access and you’ll swiftly see whether it relies on servers or not.

So, like, turn your network connection off and try it.

Why would that be the case?

Computation and storage limitations

You overestimate the hardware required to run AI.

I can run Llama 3 on my desktop with a 3060; answers are near instant.

Model training often takes exorbitant amounts of energy and storage, but running the model does not: GPT-3 reportedly cost millions of dollars in compute to train, yet the model is “only” a few hundred GB (175 billion parameters, roughly 350 GB at 16-bit precision), and a model like that can be run locally on CPU alone, if slowly.

cool

You can run a basic model on pretty mid-range hardware; the smaller ones are only 1-2 GB in size.
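For a rough sense of where those sizes come from: the weights take about (number of parameters) × (bits per weight) / 8 bytes. A back-of-the-envelope sketch; it ignores file-format overhead and the extra memory needed at runtime, and the parameter counts and bit widths below are just illustrative inputs.

```python
def weights_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# 175 billion parameters stored at 16 bits each
print(weights_size_gb(175e9, 16))  # 350.0
# 8 billion parameters quantized to 4 bits each
print(weights_size_gb(8e9, 4))     # 4.0
# 2 billion parameters quantized to 4 bits each
print(weights_size_gb(2e9, 4))     # 1.0
```

That is why a quantized small model fits comfortably on a mid-range GPU or even in ordinary RAM.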

To be fair, the first time I tried running local AI (and it actually worked), I was so surprised that I actually unplugged my Ethernet and tried again. I’m still surprised, but it’s possible for the massive amounts of training data to be compressed into a model of only 10 or 20 GB.

Username checks out

Just use firejail to sandbox it and find out.
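A small sketch of that test, assuming firejail is installed. Its `--net=none` option puts the program in a fresh network namespace with only a loopback interface, so anything that still works in there is not talking to a remote server. The app command is a hypothetical placeholder, and I have not tried this with Flatpak-packaged apps, which bring their own sandbox.

```python
import subprocess


def offline_command(app_command):
    """Prefix a command so firejail runs it with no network access."""
    # --net=none: new, unconnected network namespace (loopback only)
    return ["firejail", "--net=none", *app_command]


def run_offline(app_command):
    """Launch the command inside the no-network sandbox; return its exit code."""
    return subprocess.run(offline_command(app_command)).returncode


# Usage ("some-local-ai-app" is a placeholder for whatever you want to test):
#   run_offline(["some-local-ai-app"])
```

One caveat: if the app talks to a separate model server on the same machine, that server is outside the sandbox's loopback and will look unreachable too.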

It absolutely is. I even tested it with WiFi turned off.