Day 9: Disk Fragmenter

Megathread guidelines

- Keep top level comments as only solutions, if you want to say something other than a solution put it in a new post. (replies to comments can be whatever)

- You can send code in code blocks by using three backticks, the code, and then three backticks or use something such as https://topaz.github.io/paste/ if you prefer sending it through a URL

FAQ

- What is this?: Here is a post with a large amount of details: https://programming.dev/post/6637268

- Where do I participate?: https://adventofcode.com/

- Is there a leaderboard for the community?: We have a programming.dev leaderboard with the info on how to join in this post: https://programming.dev/post/6631465

Haskell

This was fun, I optimized away quite a bit, as a result it now runs in 0.04s for both parts together on my 2016 laptop.

In part 1 I just run through the array with a start- and an end-index whilst summing up the checksum the entire time.

In part 2 I build up Binary Trees of Free Space which allow me to efficiently search for and insert free spaces when I start traversing the disk from the back. Marking the moved files as free is omitted because the checksum is calculated for every file that is moved or not moved directly.Code

import Control.Monad import Data.Bifunctor import Control.Arrow hiding (first, second) import Data.Map (Map) import Data.Set (Set) import Data.Array.Unboxed (UArray) import qualified Data.Map as Map import qualified Data.Set as Set import qualified Data.Ord as Ord import qualified Data.List as List import qualified Data.Char as Char import qualified Data.Maybe as Maybe import qualified Data.Array.Unboxed as UArray toNumber = flip (-) (Char.ord '0') <<< Char.ord type FileID = Int type FileLength = Int type DiskPosition = Int type File = (FileID, (DiskPosition, FileLength)) type EmptyMap = Map FileLength (Set DiskPosition) readDisk :: DiskPosition -> [(Bool, FileLength)] -> [(Bool, (DiskPosition, FileLength))] readDisk _ [] = [] readDisk o ((True, l):fs) = (True, (o, l)) : readDisk (o+l) fs readDisk o ((False, l):fs) = (False, (o, l)) : readDisk (o+l) fs parse2 :: String -> ([File], EmptyMap) parse2 s = takeWhile (/= '\n') >>> map toNumber >>> zip (cycle [True, False]) -- True is File, False is empty >>> readDisk 0 >>> List.partition fst >>> join bimap (map snd) >>> first (zip [0..]) >>> first List.reverse >>> second (filter (snd >>> (/= 0))) >>> second (List.sortOn snd) >>> second (List.groupBy (curry $ (snd *** snd) >>> uncurry (==))) >>> second (List.map (snd . head &&& map fst)) >>> second (List.map (second Set.fromDistinctAscList)) >>> second Map.fromDistinctAscList $ s maybeMinimumBy :: (a -> a -> Ordering) -> [a] -> Maybe a maybeMinimumBy _ [] = Nothing maybeMinimumBy f as = Just $ List.minimumBy f as fileChecksum fid fpos flen = fid * (fpos * flen + ((flen-1) * (flen-1) + (flen-1)) `div` 2) type Checksum = Int moveFilesAccumulate :: (Checksum, EmptyMap) -> File -> (Checksum, EmptyMap) moveFilesAccumulate (check, spaces) (fid, (fpos, flen)) = do let bestFit = Map.map (Set.minView) >>> Map.toList >>> List.filter (fst >>> (>= flen)) >>> List.filter (snd >>> Maybe.isJust) >>> List.map (second Maybe.fromJust) -- [(FileLength, (DiskPosition, Set DiskPosition))] >>> List.filter (snd >>> fst >>> (< fpos)) >>> maybeMinimumBy (\ (_, (p, _)) (_, (p', _)) -> Ord.compare p p') $ spaces case bestFit of Nothing -> (check + fileChecksum fid fpos flen, spaces) Just (spaceLength, (spacePosition, remainingSet)) -> do -- remove the old empty entry by replacing the set let updatedMap = Map.update (const $! Just remainingSet) spaceLength spaces -- add the remaining space, if any let remainingSpace = spaceLength - flen let remainingSpacePosition = spacePosition + flen let updatedMap' = if remainingSpace == 0 then updatedMap else Map.insertWith (Set.union) remainingSpace (Set.singleton remainingSpacePosition) updatedMap (check + fileChecksum fid spacePosition flen, updatedMap') parse1 :: String -> UArray Int Int parse1 s = UArray.listArray (0, sum lengthsOnly - 1) blocks where lengthsOnly = filter (/= '\n') >>> map toNumber $ s :: [Int] blocks = zip [0..] >>> List.concatMap (\ (index, n) -> if index `mod` 2 == 0 then replicate n (index `div` 2) else replicate n (-1)) $ lengthsOnly :: [Int] moveBlocksAccumulate :: Int -> Int -> UArray Int Int -> Int moveBlocksAccumulate start stop array | start == stop = if startBlock == -1 then 0 else start * startBlock | start > stop = 0 | stopBlock == -1 = moveBlocksAccumulate start (stop - 1) array | startBlock == -1 = movedChecksum + moveBlocksAccumulate (start + 1) (stop - 1) array | startBlock /= -1 = startChecksum + moveBlocksAccumulate (start + 1) stop array where startBlock = array UArray.! start stopBlock = array UArray.! stop movedChecksum = stopBlock * start startChecksum = startBlock * start part1 a = moveBlocksAccumulate 0 arrayLength a where (_, arrayLength) = UArray.bounds a part2 (files, spaces) = foldl moveFilesAccumulate (0, spaces) >>> fst $ files main = getContents >>= print . (part1 . parse1 &&& part2 . parse2)Uiua

Just a port of my Dart solution from earlier really, and it shows, as it takes about 30 seconds on the live data.

(edit: I just noticed the little alien in the code

(⋅⋅∘|⋅∘|∘)which is literally flipping the stack (╯°□°)╯︵ ┻━┻!)Data ← "2333133121414131402" FS ← ↙⌊÷2⧻.▽≡⋕:♭⍉⊟⊃(⇡|↯:¯1)⧻.Data # Build up a map of the FS. MoveB ← ⍜(⊡|⋅)⊃(⋅⋅∘|⋅∘|∘) ⊡¯1.:⊢⊚⌕¯1. # Find a space, move block into it. MoveBs ← ⍢(⍢(↘¯1|=¯1⊣)↘¯1MoveB|>0⧻⊚⌕¯1) TryMove ← ⨬(◌|∧⍜⊏⇌⍉)/×/>. MoveFile ← ( ⊃(⊚⌕↯:¯1⧻|∘)⊚⌕⊙.⊙. # get posns from, start posn to. ⨬(◌◌|TryMove ⊟+⊙◌°⊏,⊢)>0⧻. # check posn to is good, swap. ) Check ← /+/×⊟⇡⧻.↥0 &p Check MoveBs FS &p Check ∧MoveFile⇌+1⇡/↥.FS(edit: improved. Part1 is instant, part2 is about 17sec, but the alien has left)

Data ← "2333133121414131402" FS ← ▽≡⋕:↙⧻:♭⍉⊟⊃(⇡|↯:¯1)⧻..Data # Build up a map of the FS. Ixs ← ⊃(⊚¬|⇌⊚)≥0 # Get indices of space, and of blocks reversed. SwapBs ← ▽⊸≡/>⍉⊟∩↙⟜:↧∩⧻,, # Join them where space < block. Files ← ⇌≡(□⊚)⊞=⇡+1/↥. Move ← ∧(⍜⊏⇌)⍉⊟+⇡⧻,⊢ # (tos, froms, fs) MoveFile ← ( ⊚⌕⊙,↯:¯1⧻. # List of possible starts ⨬(◌◌|⨬(◌◌|Move)>∩⊢,,)>0⧻. # Only valid, leftwards starts ) Check ← /+/×⊟⇡⧻.↥0 &p Check ∧⍜⊏⇌SwapBs⊸Ixs FS &p Check ∧◇MoveFile Files .FSHaskell

Quite messy

{-# LANGUAGE LambdaCase #-} module Main where import Control.Applicative import Control.Arrow import Control.Monad import Control.Monad.ST import Control.Monad.Trans import Control.Monad.Trans.Maybe import Data.Array.ST import Data.Array.Unboxed import Data.Char import Data.List import Data.Maybe parse = zip ids . fmap digitToInt . takeWhile (/= '\n') ids = intersperse Nothing $ Just <$> [0 ..] expand :: [(a, Int)] -> [a] expand = foldMap (uncurry $ flip replicate) process l = runSTArray $ do arr <- newListArray (1, length l) l getBounds arr >>= uncurry (go arr) where go arr iL iR = do (iL', iR') <- advance arr (iL, iR) if iL' < iR' then swap arr iL' iR' *> go arr iL' iR' else return arr swap arr i j = do a <- readArray arr i readArray arr j >>= writeArray arr i writeArray arr j a advance arr (h, t) = (,) <$> advanceHead arr h <*> advanceTail arr t where advanceHead arr i = readArray arr i >>= \case Nothing -> return i _ -> advanceHead arr (succ i) advanceTail arr i = readArray arr i >>= \case Nothing -> advanceTail arr (pred i) _ -> return i checksum = sum . zipWith (*) [0 ..] process2 l = runSTArray $ do let idxs = scanl' (+) 1 $ snd <$> l iR = last idxs arr <- newArray (1, iR) Nothing forM_ (zip idxs l) $ \(i, v) -> writeArray arr i (Just v) runMaybeT $ go arr iR return arr where go :: MArr s -> Int -> MaybeT (ST s) () go arr iR = do (i, sz) <- findVal arr iR (findGap arr sz 1 >>= move arr i) <|> return () go arr $ pred i type MArr s = STArray s Int (Maybe (Maybe Int, Int)) findGap :: MArr s -> Int -> Int -> MaybeT (ST s) Int findGap arr n i = do mx <- lift $ snd <$> getBounds arr guard $ i <= mx ( do Just (Nothing, v) <- lift (readArray arr i) guard $ v >= n hoistMaybe $ Just i ) <|> findGap arr n (succ i) findVal :: MArr s -> Int -> MaybeT (ST s) (Int, Int) findVal arr i = do guard $ i >= 1 lift (readArray arr i) >>= \case Just (Just _, sz) -> hoistMaybe $ Just (i, sz) _ -> findVal arr $ pred i move arr iVal iGap = do guard $ iGap < iVal Just (Nothing, gap) <- lift $ readArray arr iGap v@(Just (Just _, sz)) <- lift $ readArray arr iVal lift . writeArray arr iVal $ Just (Nothing, sz) lift $ writeArray arr iGap v when (gap > sz) . lift . writeArray arr (iGap + sz) $ Just (Nothing, gap - sz) part1 = checksum . catMaybes . elems . process . expand part2 = checksum . fmap (fromMaybe 0) . expand . catMaybes . elems . process2 main = getContents >>= print . (part1 &&& part2) . parsePYTHON

Execution Time: Part1 = 0.02 seconds. Part2 = ~2.1 seconds. total = ~2.1 seconds

Aiming for simplicity over speed. This is pretty fast for not employing simple tricks like trees and all that.

code

because of text limit and this code being slow, I put it in a topaz paste: [ link ]

Edit:

New version that is using a dictionary to keep track of the next empty slot that fits the current index.

Execution Time: Part1 = 0.02 seconds. Part2 = ~0.08 seconds. total = ~0.08 seconds 80 ms

code

you can also find this code in the Topaz link: [ link ]

Edit: final revision. I just realized that the calculating for “last_consecutive_full_partition” was not necessary and very slow. if I know all the next available slots, and can end early once my current index dips below all next available slots then the last_consecutive_full_partition will never be reached. This drops the time now to less than ~0.1 seconds

Probably Final Edit: I found someone’s O(n) code for OCaml. I tried to convert it to be faith fully in pure python. seems to work really really fast. 30-50 ms time for most inputs. seems to scale linearly too

FastCode

def int_of_char(x): return ord(x) - ord('0') # Represent content as tuples: # ('Empty', size) or ('File', id, size) def parse(line): arr = [] for i in range(len(line)): c = int_of_char(line[i]) if i % 2 == 0: arr.append(('File', i // 2, c)) else: arr.append(('Empty', c)) return arr def int_sum(low, high): return (high - low + 1) * (high + low) // 2 def size(elem): t = elem[0] if t == 'Empty': return elem[1] else: return elem[2] def part1(array): total = 0 left = 0 pos = 0 right = len(array) - 1 while left < right: if array[left][0] == 'File': # File _, fid, fsize = array[left] total += fid * int_sum(pos, pos + fsize - 1) pos += fsize left += 1 else: # Empty _, esize = array[left] if array[right][0] == 'Empty': right -= 1 else: # Right is File _, fid, fsize = array[right] if esize >= fsize: array[left] = ('Empty', esize - fsize) total += fid * int_sum(pos, pos + fsize - 1) pos += fsize right -= 1 else: array[right] = ('File', fid, fsize - esize) total += fid * int_sum(pos, pos + esize - 1) pos += esize left += 1 # If one element remains (left == right) if left == right and left < len(array): if array[left][0] == 'File': _, fid, fsize = array[left] total += fid * int_sum(pos, pos + fsize - 1) return total def positions(arr): total = 0 res = [] for e in arr: res.append(total) total += size(e) return res def array_fold_right_i(f, arr, acc): pos = len(arr) - 1 for elt in reversed(arr): acc = f(elt, pos, acc) pos -= 1 return acc def part2(array): def find_empty(size_needed, max_pos, pos): while pos <= max_pos: if array[pos][0] == 'File': raise Exception("Unexpected: only empty at odd positions") # Empty _, esize = array[pos] if esize >= size_needed: array[pos] = ('Empty', esize - size_needed) return pos pos += 2 return None emptys = [1 if i < 10 else None for i in range(10)] pos_arr = positions(array) def fold_fun(elt, i, total): if elt[0] == 'Empty': return total # File _, fid, fsize = elt init_pos = emptys[fsize] if init_pos is None: new_pos = pos_arr[i] else: opt = find_empty(fsize, i, init_pos) if opt is None: new_pos = pos_arr[i] else: new_pos = pos_arr[opt] pos_arr[opt] += fsize emptys[fsize] = opt return total + fid * int_sum(new_pos, new_pos + fsize - 1) return array_fold_right_i(fold_fun, array, 0) def main(): with open('largest_test', 'r') as f: line = f.read().replace('\r', '').replace('\n', '') arr = parse(line) arr_copy = arr[:] p1 = part1(arr_copy) print("Part 1 :", p1) p2 = part2(arr) print("Part 2 :", p2) if __name__ == "__main__": main()So cool, I was very hyped when I managed to squeeze out the last bit of performance, hope you are too. Especially surprised you managed it with python, even without the simple tricks like trees ;)

I wanted to try it myself, can confirm it runs in under 0.1s in performance mode on my laptop, I am amazed though I must admin I don’t understand your newest revision. 🙈

Just to let you know, I posted the fastest python version I could come up with. Which took heavy inspiration from [ link to github ]

supposedly O(n) linear time, and does seem to work really fast.

Thanks! your Haskell solution is extremely fast and I don’t understand your solution, too. 🙈 lol

My latest revision just keeps a dict with lists of known empty slots with the length being the dict key, including partially filled slots. I iteratively find the slot that has the lowest index number and make sure the lists are properly ordered from lowest to highest index number.

looking at the challenge example/description, it shows a first pass only type of “fragmenting”. we can be confident that if something did not fit, it can just stay in the same spot even if another slot frees up enough space for it to fit. so just checking if current index is lower than the lowest index number of any of the slot lengths would just be enough to stop early. That is why I got rid of

last_consecutive_full_partitionbecause it was slowing it down by up to 2 seconds.in example, even if

5555,6666, or8888can fit in the new spot created by moving44, they are staying put. Thus a first pass only sort from back to front.00...111...2...333.44.5555.6666.777.888899 0099.111...2...333.44.5555.6666.777.8888.. 0099.1117772...333.44.5555.6666.....8888.. 0099.111777244.333....5555.6666.....8888.. 00992111777.44.333....5555.6666.....8888..I only now found your edit after I had finished my previous comment. I think splitting into two lists may be good: one List of Files and one of Empty Blocks, I think this may not work with your checksumming so maybe not.

Thank you for the detailed explanation!, it made me realize that our solutions are very similar. Instead of keeping a

Dict[Int, List[Int]]where the value list is ordered I have aDict[Int, Tree[Int]]which allows for easy (and fast!) lookup due to the nature of trees. (Also lists in haskell are horrible to mutate)I also apply the your technique of only processing each file once, instead of calculating the checksum afterwards on the entire list of file blocks I calculate it all the time whenever I process a file. Using some maths I managed to reduce the sum to a constant expression.

yeah, I was a bit exhausted thinking in a high level abstract way. I do think that if I do the checksum at the same time I could shave off a few more milliseconds. though it is at like the limits of speed, especially for python with limited data types(no trees lol). Decently fast enough for me :)

edit: I also just tested it and splitting into two lists gave no decent speed up and made it slower. really iterating backwards is fast with that list slice. I can’t think of another way to speed it up past it can do rn

Thank you for trying, oh well. Maybe we are simply at the limits.

so if I look at each part of my code. the first 4 lines will take 20 ms

input_data = input_data.replace('\r', '').replace('\n', '') part2_data = [[i//2 for _ in range(int(x))] if i%2==0 else ['.' for _ in range(int(x))] for i,x in enumerate(input_data)] part2_data = [ x for x in part2_data if x!= [] ] part1_data = [y for x in part2_data for y in x]The part1 for loop will take 10 ms.

The for loop to set up

next_empty_slot_by_lengthwill take another 10 ms.The part2 for loop will take 10 ms, too!

and adding up the part2 checksums will add another 10 ms.

So, in total, it will do it in ~60 ms, but python startup overhead seems to add 20-40 ms depending if you are on Linux(20 ms) or Windows(40 ms). both are Host, not virtual. Linux usually has faster startup time.

I am not sure where I would see a speed up. It seems that the startup overhead makes this just slower than the other top performing solutions which are also hitting a limit of 40-60 ms.

no way, someone is able to do it in O(n) time with OCaml. absolutely nutty. lol

Thank you for the link, this is crazy!

Trees are a poor mans Sets and vice versa .-.

ah well, I tried switching to python’s

set()but it was slow because of the fact it is unordered. I would need to use amin()to find the minimum index number, which was slow af. indexing might be fast butpop(0)on a list is also just as fast.(switching to deque had no speed up either) The list operations I am using are mostly O(1) timeIf I comment out this which does the adding:

# adds checksums part2_data = [y for x in part2_data for y in x] part2 = 0 for i,x in enumerate(part2_data): if x != '.': part2 += i*xso that it isolates the checksum part. it is still only 80-100ms. so the checksum part had no noticeable slowdown, even if I were to do the check sum at the same time I do the sorting it would not lower execution time.

C

First went with a doubly linked list approach, but it was quite verbose and we’re dealing with short runs (max 9) anyway so back to the flat array. Plenty fast too - on my 2015 PC:

day09 0:00.05 1648 Kb 0+179 faultsCode

#include "common.h" /* * First went with a doubly linked list approach, but it was quite verbose * and we're dealing with short runs (max 9) anyway. */ static char input[20*1000+1]; static short disk[200*1000]; int input_sz, disk_sz; static void defrag(int p2) { int a,b, a0=0, run, gap; /* * b runs back to front, finding files. * a runs front to back (from first gap, a0), finding gaps. * * For part 1 we short circuit the file length check so it deals * with single tiles. */ for (b=disk_sz-1; b > 0; b--) { /* find and measure next file from back */ for (; b>0 && !disk[b]; b--) ; for (run=1; p2 && b>0 && disk[b-1]==disk[b]; b--, run++) ; /* find the first gap */ for (; a0 < b && disk[a0]; a0++) ; /* find a gap large enough */ for (a=a0, gap=0; a<b; a++) if (!disk[a]) { for (gap=1; disk[a+gap] == disk[a]; gap++) ; if (gap >= run) break; } /* move if its */ if (gap >= run) for (; run > 0; a++, run--) { disk[a] = disk[b+run-1]; disk[b+run-1] = 0; } } } int main(int argc, char **argv) { int part, i,j; uint64_t ans[2]={}; if (argc > 1) DISCARD(freopen(argv[1], "r", stdin)); input_sz = (int)fread(input, 1, sizeof(input), stdin); assert(!ferror(stdin)); assert(feof(stdin)); for (part=0; part<2; part++) { disk_sz = 0; for (i=0; i < input_sz && isdigit(input[i]); i++) for (j=0; j < input[i]-'0'; j++) { assert(disk_sz < (int)LEN(disk)); disk[disk_sz++] = i%2 ? 0 : i/2+1; } defrag(part); for (i=0; i < disk_sz; i++) if (disk[i]) ans[part] += i * (disk[i]-1); } printf("08: %"PRIu64" %"PRIu64"\n", ans[0], ans[1]); return 0; }https://github.com/sjmulder/aoc/blob/master/2024/c/day09.c

Also did 2016 day 6 because reasons and I think it turned out real nice!

Code

#include <stdio.h> int main(int argc, char **argv) { char buf[16], p1[9]="aaaaaaaa", p2[9]="aaaaaaaa"; int counts[8][256]={}, i,j; if (argc > 1) freopen(argv[1], "r", stdin); while (fgets(buf, sizeof(buf), stdin)) for (i=0; i<8 && buf[i] >= 'a' && buf[i] <= 'z'; i++) counts[i][(int)buf[i]]++; for (i=0; i<8; i++) for (j='a'; j<='z'; j++) { if (counts[i][j] > counts[i][(int)p1[i]]) p1[i] = j; if (counts[i][j] < counts[i][(int)p2[i]]) p2[i] = j; } printf("06: %s %s\n", p1, p2);I’m also doing 2016 concurrently with this year!

Julia

Oh today was a struggle. First I did not get what exactly the task wanted me to do and then in part 2 I tried a few different ideas which all failed because I changed the disk while I was indexing into it. Finally now I reworked part 2 not moving the blocks at all, just using indexes and it works.

I feel that there is definitely something to learn here and that’s what I like about AoC so far. This is my first AoC but I hope that I won’t have to put this much thought into the rest, since I should definitely use my time differently.

Code

function readInput(inputFile::String) f = open(inputFile,"r"); diskMap::String = readline(f); close(f) disk::Vector{String} = [] id::Int = 0 for (i,c) in enumerate(diskMap) if i%2 != 0 #used space for j=1 : parse(Int,c) push!(disk,string(id)) end id += 1 else #free space for j=1 : parse(Int,c) push!(disk,".") end end end return disk end function getDiscBlocks(disk::Vector{String})::Vector{Vector{Int}} diskBlocks::Vector{Vector{Int}} = [] currBlock::Int = parse(Int,disk[1]) #-1 for free space blockLength::Int = 0; blockStartIndex::Int = 0 for (i,b) in enumerate(map(x->(x=="." ? -1 : parse(Int,x)),disk)) if b == currBlock blockLength += 1 else #b!=currBlock push!(diskBlocks,[currBlock,blockLength,blockStartIndex,i-2]) currBlock = b blockLength = 1 blockStartIndex = i-1 #start of next block end end push!(diskBlocks,[currBlock,blockLength,blockStartIndex,length(disk)-1]) return diskBlocks end function compressDisk(disk::Vector{String})::Vector{Int} #part 1 compressedDisk::Vector{Int} = [] startPtr::Int=1; endPtr::Int=length(disk) while endPtr >= startPtr while endPtr>startPtr && disk[endPtr]=="." endPtr -= 1 end while startPtr<endPtr && disk[startPtr]!="." push!(compressedDisk,parse(Int,disk[startPtr])) about AoC startPtr += 1 end push!(compressedDisk,parse(Int,disk[endPtr])) startPtr+=1;endPtr-=1 end return compressedDisk end function compressBlocks(diskBlocks::Vector{Vector{Int}}) for i=length(diskBlocks) : -1 : 1 #go through all blocks, starting from end diskBlocks[i][1] == -1 ? continue : nothing for j=1 : i-1 #look for large enough empty space diskBlocks[j][1]!=-1 || diskBlocks[j][2]<diskBlocks[i][2] ? continue : nothing #skip occupied blocks and empty blocks that are too short diskBlocks[i][3] = diskBlocks[j][3] #set start index diskBlocks[i][4] = diskBlocks[j][3]+diskBlocks[i][2]-1 #set end index diskBlocks[j][3] += diskBlocks[i][2] #move start of empty block diskBlocks[j][2] -= diskBlocks[i][2] #adjust length of empty block break end end return diskBlocks end function calcChecksum(compressedDisk::Vector{Int})::Int checksum::Int = 0 for (i,n) in enumerate(compressedDisk) checksum += n*(i-1) end return checksum end function calcChecksumBlocks(diskBlocks::Vector{Vector{Int}})::Int checksum::Int = 0 for b in diskBlocks b[1]==-1 ? continue : nothing for i=b[3] : b[4] checksum += b[1]*i end end return checksum end disk::Vector{String} = readInput("input/day09Input") @info "Part 1" println("checksum: $(calcChecksum(compressDisk(disk)))") @info "Part 2" println("checksum: $(calcChecksumBlocks(compressBlocks(getDiscBlocks(disk)))")Was really blanking on how to do this one nicely, so a bunch of stacked loops it is…

Also ended up writing two separate solutions for the first and second part, since I couldn’t get acceptable performance otherwise. Still takes half a second on my machine, mainly on the second part.This is technically the second implementation, the first one took minutes to calculate, so I wasn’t really okay with stamping it as my solution-of-choice.

Can definitely still be improved, but I’ve been poking and prodding at this code for hours on end now, so it’s long past time to let it sit for a while and see if I get any better ideas later.

C#

int[] layout = new int[0]; public void Input(IEnumerable<string> lines) { layout = string.Join("", lines).ToCharArray().Select(c => int.Parse(c.ToString())).ToArray(); } public void Part1() { ushort?[] blocks = BuildBlockmap().ToArray(); var it = 0; for (var i = blocks.Length - 1; i > it; i--) { if (blocks[i] == null) continue; while (it < blocks.Length && blocks[it] != null) ++it; if (it >= blocks.Length) break; (blocks[it], blocks[i]) = (blocks[i], null); } long checksum = 0; foreach (var part in blocks.OfType<ushort>().Select((b, i) => i * b)) checksum += part; Console.WriteLine($"Checksum: {checksum}"); } public void Part2() { var sparse = BuildSparsemap().ToList(); for (var i = sparse.Count - 1; i >= 0; i--) { if (sparse[i].Item1 == null) continue; for (var j = 0; j < i; ++j) { if (sparse[j].Item1 != null) continue; if (sparse[i].Item2 > sparse[j].Item2) continue; var size = sparse[j].Item2; size -= sparse[i].Item2; (sparse[j], sparse[i]) = (sparse[i], (null, sparse[i].Item2)); if (i + 1 < sparse.Count && sparse[i + 1].Item1 == null) { sparse[i] = (null, (ushort)(sparse[i].Item2 + sparse[i + 1].Item2)); sparse.RemoveAt(i + 1); } if (sparse[i - 1].Item1 == null) { sparse[i - 1] = (null, (ushort)(sparse[i - 1].Item2 + sparse[i].Item2)); sparse.RemoveAt(i); } if (size > 0) sparse.Insert(j + 1, (null, size)); j = i + 1; } } int ind = 0; long checksum = 0; foreach (var (val, cnt) in sparse) for (var i = 0; i < cnt; ++i) { checksum += (val ?? 0) * ind; ++ind; } Console.WriteLine($"Checksum: {checksum}"); } IEnumerable<ushort?> BuildBlockmap() { ushort blockit = 0; bool block = true; foreach (var value in layout) { for (int i = 0; i < value; ++i) yield return block ? blockit : null; if (block) blockit++; block = !block; } } IEnumerable<(ushort?, ushort)> BuildSparsemap() { ushort blockit = 0; bool block = true; foreach (var value in layout) { if (block) yield return (blockit++, (ushort)value); else yield return (null, (ushort)value); block = !block; } }Python part 1

This is working for the demo, but not for the actual data. I’m a bit lost on why.

def part1(data: data) -> None: disk_map, free = gen_disk_map(data.getlines()[0]) for f in free[:-2]: disk_map[f] = disk_map.pop(max(disk_map.keys())) print(sum([k * v for k, v in disk_map.items()])) def gen_disk_map(raw: str): file_id = 0 pos = 0 disk_map, free = {}, [] for read_index, val in enumerate(map(int, raw)): if read_index % 2 == 0: for _ in range(val): disk_map[pos] = file_id pos += 1 file_id += 1 else: free.extend(range(pos, pos + val)) pos += val return disk_map, freeThis part looks suspicious:

for f in range(len(free) - 2): disk_map[free[f]] = disk_map.pop(max(disk_map.keys()))You’re always moving exactly

len(free) - 2blocks, but that doesn’t sound to be correct in all cases. If you consider the following input:191, you only need to move one block, and not seven.I’m always moving one (file)part at a time, so that should be fine… I think.

The fact that I need [:-2] suggests that I’m doing something wrong in parsing the input I guess…

TypeScript

Actually kinda proud of my solution considering how hectic today has been! I actually didn’t spend too much time on this solution too :) Runs in ~0.5s.

Solution

import { AdventOfCodeSolutionFunction } from "./solutions"; import { MakeEmptyGenericArray } from "./utils/utils"; const pretty_print = (disk: Array<number>) => disk.reduce<string>((prev, curr) => prev + (curr == -1 ? "." : curr), ""); const checksum = (disk: Array<number>) => disk.reduce<number>((prev, curr, index) => prev + (curr == -1 ? 0 : curr * index), 0); const findSlice = (disk: Array<number>, id: number, startFrom?: number) => { const sectionStart = disk.indexOf(id, startFrom); if (sectionStart == -1) return [-1, -1]; let sectionEnd = sectionStart; while (disk.length > ++sectionEnd && disk[sectionEnd] == id); return [sectionStart, sectionEnd]; } export const solution_9: AdventOfCodeSolutionFunction = (input) => { let isFile = false; let id = 0; // make the disk const disk = input.split("").flatMap((v) => { isFile = !isFile; const count = Number(v); if (isFile) { id++; return MakeEmptyGenericArray(count, () => id - 1); } return MakeEmptyGenericArray(count, () => -1); }); // make a copy of the disk const fragmentedDisk = [...disk]; // start moving elements on the disk let start = 0 let end = fragmentedDisk.length - 1; while (start < end) { if (fragmentedDisk[start] != -1) { start++; continue; } if (fragmentedDisk[end] == -1) { end--; continue; } // swap the values fragmentedDisk[start] = fragmentedDisk[end] fragmentedDisk[end] = -1; start++; end--; } main: while (id-- > 0) { // find the section that has the file const [sectionStart, sectionEnd] = findSlice(disk, id); // this will never return -1 const sectionLength = sectionEnd - sectionStart; // find any section that can fit the file let freeStart; let freeEnd = 0; do { [freeStart, freeEnd] = findSlice(disk, -1, freeEnd); // can't find any free spaces or too far right if (freeStart == -1 || freeStart > sectionStart) continue main; } while (freeEnd - freeStart < sectionLength); // switch places let i = 0; while(sectionStart + i < sectionEnd) { disk[freeStart + i] = id; disk[sectionStart + i++] = -1; } } // calculate the checksums return { part_1: checksum(fragmentedDisk), part_2: checksum(disk), } }Haskell

Unoptimized as hell, also brute-force approach (laptops are beasts).

Spoiler

{-# LANGUAGE MultiWayIf #-} import Control.Arrow import Control.Monad.ST (ST, runST) import Data.Array.ST (STUArray) import qualified Data.List as List import qualified Data.Maybe as Maybe import qualified Data.Array.MArray as MArray toNumber '0' = 0 toNumber '1' = 1 toNumber '2' = 2 toNumber '3' = 3 toNumber '4' = 4 toNumber '5' = 5 toNumber '6' = 6 toNumber '7' = 7 toNumber '8' = 8 toNumber '9' = 9 parse :: String -> [Int] parse s = filter (/= '\n') >>> map toNumber >>> zip [0..] >>> List.concatMap (\ (index, n) -> if index `mod` 2 == 0 then replicate n (index `div` 2) else replicate n (-1)) $ s calculateChecksum :: [Int] -> Int calculateChecksum = zip [0..] >>> filter (snd >>> (/= -1)) >>> map (uncurry (*)) >>> sum moveFiles :: [Int] -> ST s Int moveFiles bs = do let bLength = length bs marray <- MArray.newListArray (1, bLength) bs moveFiles' marray 1 bLength elems <- MArray.getElems marray return $ calculateChecksum elems moveFiles' :: STUArray s Int Int -> Int -> Int -> ST s () moveFiles' a start stop | start == stop = return () | otherwise = do stopBlock <- MArray.readArray a stop if stopBlock == -1 then moveFiles' a start (pred stop) else do startBlock <- MArray.readArray a start if startBlock == -1 then do MArray.writeArray a start stopBlock MArray.writeArray a stop (-1) moveFiles' a (succ start) (pred stop) else moveFiles' a (succ start) stop countConsecutive :: STUArray s Int Int -> Int -> Int -> ST s Int countConsecutive a i step = do block <- MArray.readArray a i let nextI = i + step bounds <- MArray.getBounds a if | MArray.inRange bounds nextI -> do nextBlock <- MArray.readArray a nextI if nextBlock == block then do steps <- countConsecutive a nextI step return $ 1 + steps else return 1 | otherwise -> return 1 findEmpty :: STUArray s Int Int -> Int -> Int -> Int -> ST s (Maybe Int) findEmpty a i l s = do block <- MArray.readArray a i blockLength <- countConsecutive a i 1 let nextI = i + blockLength bounds <- MArray.getBounds a let nextInBounds = MArray.inRange bounds nextI if | i >= s -> return $! Nothing | block == -1 && blockLength >= l -> return $ Just i | block /= -1 && nextInBounds -> findEmpty a nextI l s | blockLength <= l && nextInBounds -> findEmpty a nextI l s | not nextInBounds -> return $! Nothing moveDefragmenting :: [Int] -> ST s Int moveDefragmenting bs = do let bLength = length bs marray <- MArray.newListArray (1, bLength) bs moveDefragmenting' marray bLength elems <- MArray.getElems marray return $ calculateChecksum elems moveDefragmenting' :: STUArray s Int Int -> Int -> ST s () moveDefragmenting' a 1 = return () moveDefragmenting' a stop | otherwise = do stopBlock <- MArray.readArray a stop stopLength <- countConsecutive a stop (-1) targetBlock <- findEmpty a 1 stopLength stop elems <- MArray.getElems a let nextStop = stop - stopLength bounds <- MArray.getBounds a let nextStopInRange = MArray.inRange bounds nextStop if | stopBlock == -1 -> moveDefragmenting' a nextStop | Maybe.isJust targetBlock -> do let target = Maybe.fromJust targetBlock mapM_ (\ o -> MArray.writeArray a (stop - o) (-1)) [0..stopLength - 1] mapM_ (\ o -> MArray.writeArray a (target + o) stopBlock) [0..stopLength - 1] if nextStopInRange then moveDefragmenting' a nextStop else return () | nextStopInRange -> moveDefragmenting' a nextStop | otherwise -> return () part1 bs = runST $ moveFiles bs part2 bs = runST $ moveDefragmenting bs main = getContents >>= print . (part1 &&& part2) . parseC#

public class Day09 : Solver { private string data; public void Presolve(string input) { data = input.Trim(); } public string SolveFirst() { var arr = new List<int>(); bool file = true; int file_id = 0; foreach (var ch in data) { if (file) { Enumerable.Range(0, ch - '0').ToList().ForEach(_ => arr.Add(file_id)); file_id++; } else { Enumerable.Range(0, ch - '0').ToList().ForEach(_ => arr.Add(-1)); } file = !file; } int from_ptr = arr.Count - 1; int to_ptr = 0; while (from_ptr > to_ptr) { if (arr[to_ptr] != -1) { to_ptr++; continue; } if (arr[from_ptr] == -1) { from_ptr--; continue; } arr[to_ptr] = arr[from_ptr]; arr[from_ptr] = -1; to_ptr++; from_ptr--; } return Enumerable.Range(0, arr.Count) .Select(block_id => arr[block_id] > 0 ? ((long)arr[block_id]) * block_id : 0) .Sum().ToString(); } public string SolveSecond() { var files = new List<(int Start, int Size, int Id)>(); bool is_file = true; int file_id = 0; int block_id = 0; foreach (var ch in data) { if (is_file) { files.Add((block_id, ch - '0', file_id)); file_id++; } is_file = !is_file; block_id += (ch - '0'); } while (true) { bool moved = false; for (int from_ptr = files.Count - 1; from_ptr >= 1; from_ptr--) { var file = files[from_ptr]; if (file.Id >= file_id) continue; file_id = file.Id; for (int to_ptr = 0; to_ptr < from_ptr; to_ptr++) { if (files[to_ptr + 1].Start - files[to_ptr].Start - files[to_ptr].Size >= file.Size) { files.RemoveAt(from_ptr); files.Insert(to_ptr + 1, file with { Start = files[to_ptr].Start + files[to_ptr].Size }); moved = true; break; } } if (moved) break; } if (!moved) break; } return files.Select(file => ((long)file.Id) * file.Size * (2 * ((long)file.Start) + file.Size - 1) / 2) .Sum().ToString(); } }Nim

Wrote ugly-ass code today, but it was surprisingly easy to debug and fast.

Solution:

Part 1: Parse data into a sequence of blocks and empty space like in example (I use-1for empty space) and two indexes. First index goes 0 -> end, second index starts at the end. When we encounter empty space -> we use value from second index and decrement it (while skipping empty spaces). Repeat until both indexes meet at some point.Part 2: Parse data into sequence of block objects and try to insert each data block into each empty space block before it. Somehow it all just worked without too many bugs.

Runtime (final version): 123 ms

type BlockKind = enum Data, Space Block = object size: int case kind: BlockKind of Data: index: int of Space: discard func parseBlocks(input: string): tuple[blocks: seq[Block], id: int] = for i, c in input: let digit = c.ord - '0'.ord if i mod 2 == 0: result.blocks.add Block(kind: Data, size: digit, index: result.id) if i < input.high: inc result.id else: result.blocks.add Block(kind: Space, size: digit) proc solve(input: string): AOCSolution[int, int] = block p1: var memBlocks = newSeqOfCap[int](100_000) var indBlock = 0 for i, c in input: let digit = c.ord - '0'.ord if i mod 2 == 0: memBlocks.add (indBlock).repeat(digit) inc indBlock else: memBlocks.add -1.repeat(digit) var ind = 0 var revInd = memBlocks.high while ind <= revInd: if memBlocks[ind] == -1: while memBlocks[revInd] == -1: dec revInd result.part1 += ind * memBlocks[revInd] dec revInd else: result.part1 += ind * memBlocks[ind] inc ind block p2: var (memBlocks, index) = parseBlocks(input) var revInd = memBlocks.high while revInd > 0: doAssert memBlocks[revInd].kind == Data var spaceInd = -1 let blockSize = memBlocks[revInd].size for ind in 0..revInd: if memBlocks[ind].kind == Space and memBlocks[ind].size >= blockSize: spaceInd = ind; break if spaceInd != -1: let bSize = memBlocks[revInd].size let diffSize = memBlocks[spaceInd].size - bSize swap(memBlocks[spaceInd], memBlocks[revInd]) if diffSize != 0: memBlocks[revInd].size = bSize memBlocks.insert(Block(kind: Space, size: diffSize), spaceInd + 1) inc revInd # shift index bc we added object dec index # skip space blocks and data blocks with higher index while (dec revInd; revInd < 0 or memBlocks[revInd].kind != Data or memBlocks[revInd].index != index): discard var unitIndex = 0 for b in memBlocks: case b.kind of Data: for _ in 1..b.size: result.part2 += unitIndex * b.index inc unitIndex of Space: unitIndex += b.size

Rust

A bunch of fiddling with indices and sizes. In part 1 the disk is simulated in a Vec, for part 2 files and spaces are represented by their offset and size, collected in separate lists. Then these values are changed as necessary, with a whole bunch of

mut. In particular, files are never moved within the list of files, only their offset changes.Solution

fn part1(input: String) { let mut id: u64 = 0; let mut disk = Vec::new(); let mut file = true; for b in input.trim().bytes() { let num: usize = (b - b'0') as usize; if file { disk.extend(vec![Some(id); num]); id += 1; } else { disk.extend(vec![None; num]); } file = !file; } let mut first_free = 0; while disk[first_free].is_some() { first_free += 1 } let mut last_file = disk.len() - 1; while disk[last_file].is_none() { last_file -= 1 } while first_free < last_file { disk[first_free] = disk[last_file]; disk[last_file] = None; while disk[first_free].is_some() { first_free += 1 } while disk[last_file].is_none() { last_file -= 1 } } let checksum = disk .iter() .filter_map(|e| *e) .enumerate() .map(|(i, id)| i as u64 * id) .sum::<u64>(); println!("{checksum}"); } fn part2(input: String) { // Tuples of (idx, size) let mut free_spaces = Vec::new(); // Tuples of (idx, size, id) let mut files = Vec::new(); let mut id: u64 = 0; let mut disk_len = 0; let mut file = true; for b in input.trim().bytes() { let num = (b - b'0') as u64; if file { files.push((disk_len, num, id)); id += 1; } else { free_spaces.push((disk_len, num)); } disk_len += num; file = !file; } for (idx, size, _id) in files.iter_mut().rev() { match free_spaces .iter_mut() // Only search spaces left of file .take_while(|(sp_idx, _space)| sp_idx < idx) .find(|(_sp_idx, space)| space >= size) { None => {} // No space found Some((sp_idx, space)) => { // Move file into space *idx = *sp_idx; // Reduce free space *sp_idx += *size; *space -= *size; } } } let sum_range = |n| if n == 0 { 0 } else { (n * (n - 1)) / 2 }; let checksum = files .iter() .map(|(idx, size, id)| (sum_range(idx + size) - sum_range(*idx)) * id) .sum::<u64>(); println!("{checksum}"); } util::aoc_main!();Also on github

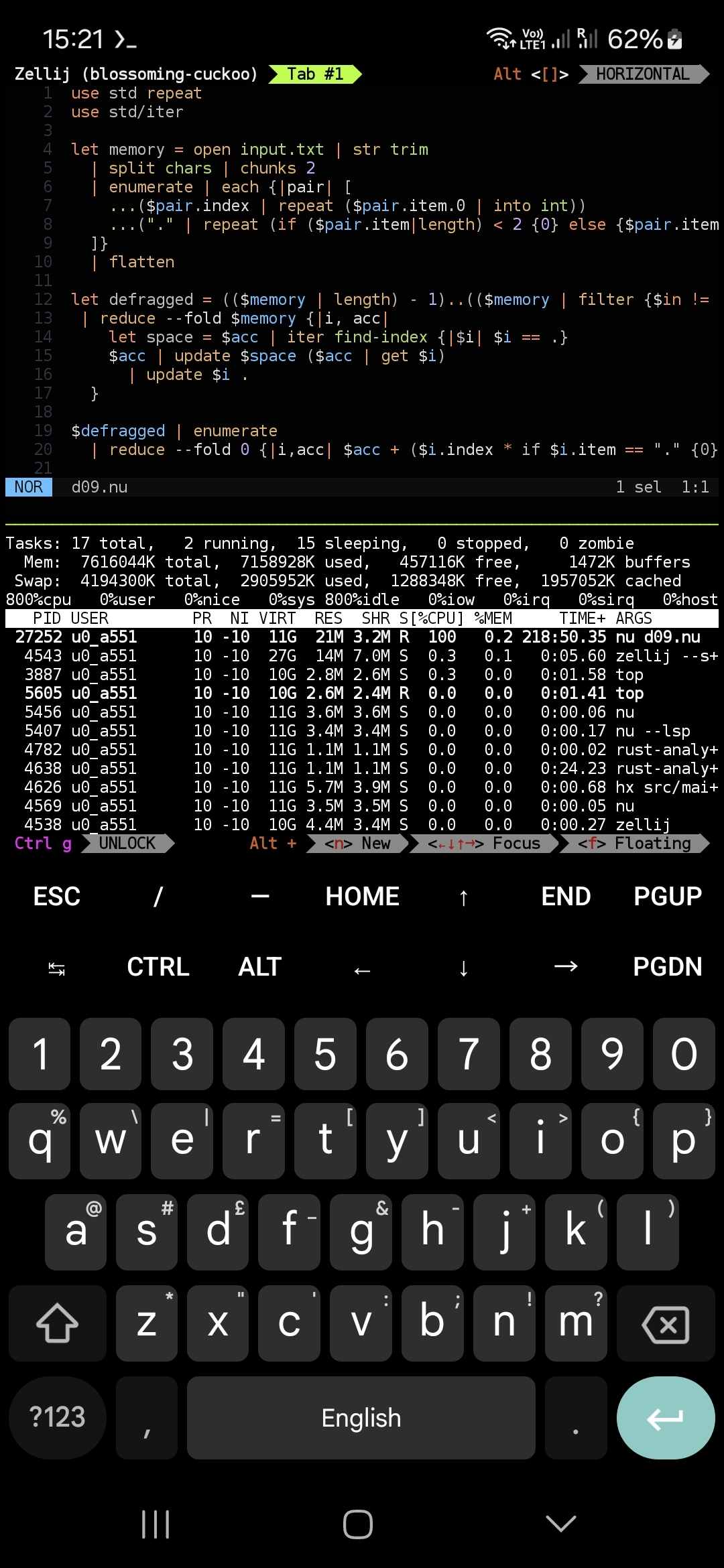

Nushell

As I’m still on holiday and normal languages are a PITA to type on a phone, I though I’d try a compiled scripting language. I’m not very familiar with it so it took longer to code and also it’s been running the first reduce step for 35 minutes so I’ve missed the 24h cutoff 😔

use std repeat use std/iter let memory = open input.txt | str trim | split chars | chunks 2 | enumerate | each {|pair| [ ...($pair.index | repeat ($pair.item.0 | into int)) ...("." | repeat (if ($pair.item|length) < 2 {0} else {$pair.item.1 | into int})) ]} | flatten let defragged = (($memory | length) - 1)..(($memory | filter {$in != .} | length)) | reduce --fold $memory {|i, acc| let space = $acc | iter find-index {|$i| $i == .} $acc | update $space ($acc | get $i) | update $i . } $defragged | enumerate | reduce --fold 0 {|i,acc| $acc + ($i.index * if $i.item == "." {0} else {$i.item | into int})}You coded this on a phone, with a touchscreen keyboard? Not sure who is more impressive, you or the unicode wizard :D

I just ran this on a laptop - it worked (part one only) but took 4h28m21s so Nushell is not a language for AoC (or I just coded it very poorly).

I think once your runtime hits 4 hours, the minutes and seconds stop being relevant :D

Is it 4hrs of 100% CPU?

One core was busier, but it wasn’t at 100%. My Rust code yesterday was the same, perhaps it’s taking too much time accessing memory.

The time was wall time (as per Starship’s output) but it was still waaay too slow to bother with again!

deleted by creator

I’ll buy “better than default on screen keyboard”, but I doubt it’ll be better than a physical keyboard. Would be nice if there was a virtual keyboard that let you easily modify the layout. I keep hitting

.instead of space on my phone.deleted by creator

Haha, thanks but we both know they’re #1 by a country mile. I think my phone’s now downclocking as it’s burning up and still hasn’t spat out an answer after two hours, so I technically haven’t completed it yet!

Edit: Calling it for now, I might figure out later why it’s so slow (there’s some easy but minor gains to be made but I’m guessing there’s some O(awful) going on that the input’s blown up)

Rust

Pretty poor performance on part 2, was initially 10s, got down to 2.5s, but still seems pretty poor.

#[cfg(test)] mod tests { fn checksum(p0: &[i64]) -> i64 { let mut csum = 0; for (i, val) in p0.iter().enumerate() { if *val == -1 { continue; } csum += *val * (i as i64); } csum } fn defrag(p0: &[i64]) -> Vec<i64> { let mut start = 0; let mut end = p0.len() - 1; let mut defragged = vec![]; while start != end + 1 { if p0[start] != -1 { defragged.push(p0[start]); start += 1; continue; } if p0[start] == -1 { defragged.push(p0[end]); start += 1; end -= 1; while p0[end] == -1 { end -= 1; } } } defragged } fn expand_disk(p0: &str) -> Vec<i64> { let mut disk = vec![]; let mut file_index = 0; let mut is_file = true; for char in p0.chars() { let val = char.to_digit(10).unwrap(); if is_file { for _ in 0..val { disk.push(file_index) } file_index += 1; } else { for _ in 0..val { disk.push(-1) } } is_file = !is_file; } disk } #[test] fn day9_part1_test() { let input: String = std::fs::read_to_string("src/input/day_9.txt") .unwrap() .trim() .into(); let disk: Vec<i64> = expand_disk(&input); let defraged = defrag(&disk); let checksum = checksum(&defraged); println!("{}", checksum); } fn find_file(p0: &[i64], file: i64) -> (usize, usize) { let mut count = 0; let mut i = p0.len() - 1; while i > 0 && p0[i] != file { i -= 1; } // At end of file while i > 0 && p0[i] == file { count += 1; i -= 1; } (i + 1, count) } fn find_slot(p0: &[i64], size: usize, end: usize) -> Option<usize> { let mut i = 0; while i < end { while p0[i] != -1 && i < end { i += 1; } let mut count = 0; while p0[i] == -1 && i < end { i += 1; count += 1; if count == size { return Some(i - count); } } } None } fn move_file(p0: &mut [i64], file: i64, size: usize, old_pos: usize, new_pos: usize) { for i in 0..size { p0[old_pos + i] = -1; p0[new_pos + i] = file; } } fn defrag2(p0: &mut [i64]) { let mut i = *p0.last().unwrap(); while i > 0 { let (old_pos, size) = find_file(p0, i); match find_slot(p0, size, old_pos) { None => {} Some(new_pos) => { if new_pos < old_pos { move_file(p0, i, size, old_pos, new_pos); } } } i -= 1; } } #[test] fn day9_part2_test() { let input: String = std::fs::read_to_string("src/input/day_9.txt") .unwrap() .trim() .into(); let mut disk: Vec<i64> = expand_disk(&input); defrag2(&mut disk); let checksum = checksum(&disk); println!("{}", checksum); } }Found a cheaty way to get sub 1s:

fn defrag2(p0: &mut [i64]) { let mut i = *p0.last().unwrap(); while i > 3000 { // Stop defragging here, probably cant find free spots after this point let (old_pos, size) = find_file(p0, i); if let Some(new_pos) = find_slot(p0, size, old_pos) { move_file(p0, i, size, old_pos, new_pos); } i -= 1; } }Might be possible to correctly do this in the find_slot code, so that if it fails to find a slot, it never searches for that size again.

edit:

fn defrag2(p0: &mut [i64]) { let mut i = *p0.last().unwrap(); let mut max_size = 10; while i > 0 { let (old_pos, size) = find_file(p0, i); if size <= max_size { if let Some(new_pos) = find_slot(p0, size, old_pos) { move_file(p0, i, size, old_pos, new_pos); } else { max_size = size - 1; } } if max_size == 0 { return; } i -= 1; } }500ms, I can go to sleep now.

haha, kept going at it, got it down to 4ms, by tracking where the searches ended, and starting from there next time.

Definitely done now :D